What is user research in UX design?

You've built something technically sophisticated. The technology works. The pilot results are promising. But when real users sit down with your product, they're lost within minutes — not because the technology fails, but because the experience was built around the team's internal logic rather than how users actually think and work.

This is a pattern that appears frequently in climate tech and deep-tech products. The engineering is sound, but the user experience reflects the builder's mental model, not the buyer's. For companies selling complex tools to utilities, procurement teams, or enterprise buyers with slow approval cycles, that gap doesn't just create friction — it extends sales cycles and stalls adoption before the technology ever gets a fair evaluation.

User research is the method that closes this gap. It's the systematic study of how target users behave, what motivates them, and where they struggle, providing evidence to drive design decisions instead of internal assumptions.

This guide covers the core methods, the step-by-step research process, common mistakes to avoid, and the tools practitioners actually use. Whether you're a technical founder building your first product experience or a product lead at a Series A company refining an existing flow, what follows will help you make research a consistent part of how decisions get made.

TLDR:

- User research replaces internal assumptions with evidence from actual users

- Every $1 invested in UX returns $100 in measurable outcomes, per Forrester research

- Qualitative methods reveal the "why" behind behavior; quantitative methods confirm how widespread a problem is

- Five users per user group typically surface most usability issues in qualitative testing; quantitative studies need 30+ participants for statistical significance

- Research only delivers value when findings connect directly to product and business decisions

Why user research matters in UX design

The ROI case for user research is well-documented, and the numbers are significant enough to treat it as a core part of the product development process rather than a later-stage refinement.

Exceptional return on investment

According to Forrester research, every dollar invested in UX brings $100 in return, translating to a 9,900% ROI. An analysis of 42 website redesigns showed usability metrics increased by 135% on average following user research activities, with specific improvements including:

- Sales and conversion rates: 100% increase

- Traffic and visitor counts: 150% increase

- User task performance: 161% increase
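The 9,900% figure follows from the standard ROI formula, which subtracts the original investment from the return before dividing by it. That is why a 100-to-1 return works out to 9,900% rather than 10,000%:

```latex
\mathrm{ROI} = \frac{\text{return} - \text{investment}}{\text{investment}} \times 100\%
  = \frac{\$100 - \$1}{\$1} \times 100\% = 9{,}900\%
```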

The 1:10:100 rule of cost savings

Research dramatically reduces expenses by catching problems early. Nielsen Norman Group found that fixing a usability issue during design costs 10 times less than fixing it during development, and 100 times less than fixing it post-launch.

This cost difference is the core argument for shifting research earlier in the product cycle. The later a design problem is discovered, the more embedded it is in the codebase, the product architecture, and user expectations. Consider what that means in practice: a deep-tech company discovers — three months post-launch — that energy operators can't locate the manual override controls in their grid monitoring tool. Fixing that navigation flaw at that stage requires a development sprint, a re-training process, and a credibility problem with a utility buyer whose procurement team is watching the rollout closely. Caught in a prototype session before development, the same issue is an afternoon's design work. Research that runs early doesn't just reduce costs — it protects deals that are already in progress.

Risk reduction and product success

Research mitigates the risk of building products that miss the mark. A B2B site case study demonstrated that research-driven information architecture changes led to an 85% increase in product findability.

This improvement directly drove significant revenue and lead generation increases. For technical products where buyers need to self-educate before engaging sales, findability is directly connected to pipeline volume.

Competitive advantage in crowded markets

Companies that conduct regular user research consistently outperform competitors in conversion and retention metrics. In technical B2B markets, embedding UX into product development accelerates time-to-market for new features, reduces customer churn, and creates measurable distance from engineering-led competitors.

In climate tech and deep-tech specifically, this distinction carries real weight. Many companies in these sectors compete against incumbents with decades of installed base and buyer familiarity. A product that's easier to evaluate, easier to onboard, and easier to use within the buyer's existing workflow can close gaps in brand equity that marketing alone cannot bridge.

Building team empathy and alignment

Beyond metrics, research builds empathy within teams by exposing everyone to real user struggles and motivations. When developers and stakeholders watch real users struggle with specific parts of a product, those observations carry more weight in prioritization conversations than any design brief could.

This shared understanding helps your team make decisions anchored in user behavior rather than internal debate, reducing the friction that comes from misaligned assumptions about what users actually need. That shared context has a direct commercial benefit too: when your whole team has watched the same procurement manager or operator struggle with a specific workflow, the fix gets prioritized — and a product that's been visibly improved based on buyer feedback carries more weight in enterprise evaluations than one that hasn't been through that process.

Types of user research: qualitative vs. quantitative

Understanding the difference between qualitative and quantitative research is fundamental to choosing the right approach.

Qualitative research: understanding the "why"

Qualitative research focuses on direct assessment of usability through observational findings. It identifies which design features are easy or hard to use and, critically, reveals why users behave certain ways.

Qualitative methods include user interviews that surface motivations and mental models, usability testing with think-aloud protocols, field studies that observe users in their natural environments, and ethnographic research that accounts for cultural and contextual factors.

Key characteristics:

- Small sample sizes (typically 5-6 users per user group)

- Rich, descriptive data about user experiences

- Answers "why" and "how to fix" questions

- You use this formatively during design to identify and solve problems

The insights qualitative research surfaces also shape how you talk about your product in sales conversations. When you understand the mental models and language your buyers bring to an evaluation, you can position directly against their actual concerns — not the ones you assumed they had.

Quantitative research: measuring the "what" and "how many"

Quantitative research gathers data indirectly through measurement and instruments, producing numerical metrics that can be statistically analyzed.

Common approaches include large-scale surveys measuring attitudes, analytics platforms tracking behavioral patterns, A/B tests comparing design variants, and benchmark usability testing run under controlled conditions.

Quantitative research requires large sample sizes (30+ users for statistical significance), produces numerical data that enables trend analysis, and answers "how many" and "how much" questions rather than "why." You use this summatively after launch to evaluate performance. When a procurement team or technical evaluator asks for evidence that your product performs as claimed, quantitative benchmarks are what give your answer credibility.

The complementary relationship

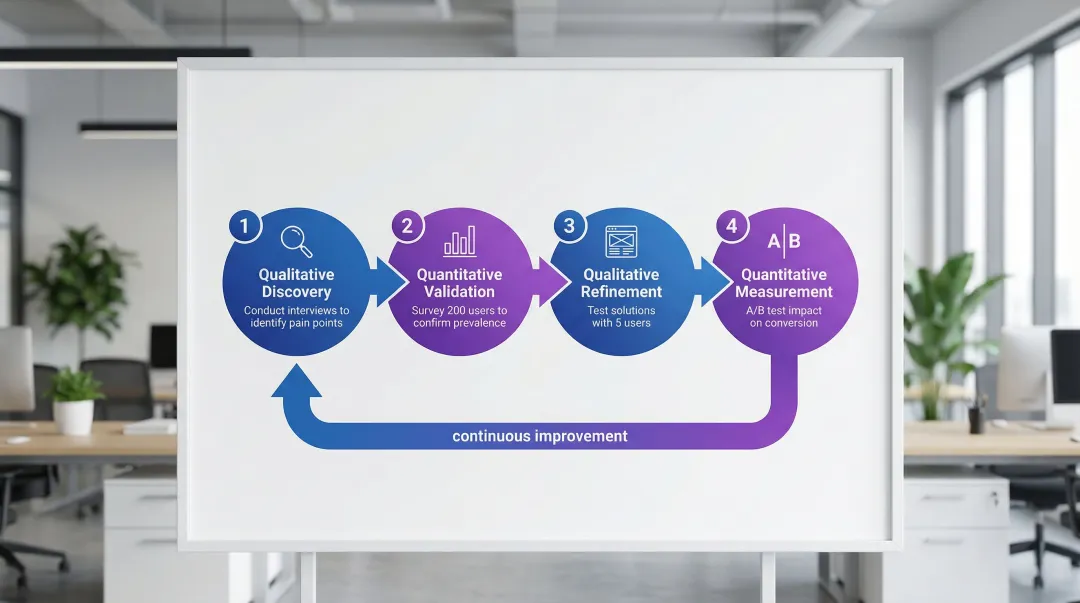

The most effective research strategies combine both approaches in what's called mixed-methods research.

Qualitative methods uncover insights and identify problems. Quantitative methods validate those insights at scale and measure their magnitude.

- Qualitative discovery: Conduct interviews to identify pain points

- Quantitative validation: Survey 200 users to confirm prevalence

- Qualitative refinement: Test solutions with 5 users

- Quantitative measurement: A/B test impact on conversion

This combination is what allows you to move from "we think users struggle with X" to "we observed 6 users struggling with X, and 78% of survey respondents reported the same issue." That level of specificity is what gets research findings acted on in product planning sessions.
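A figure like "78% of survey respondents" is itself an estimate, and how much weight it can bear depends on the sample size. As a minimal sketch, assuming a simple random sample of respondents, the normal-approximation (Wald) 95% confidence interval for a proportion can be computed with the standard library alone. The 156-of-200 counts are hypothetical, chosen to match the 78% and 200-respondent examples above.

```python
import math


def proportion_ci(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wald (normal-approximation) confidence interval for a proportion.

    Reasonable for large samples such as a 200-person survey; it becomes
    unreliable for very small n or for proportions near 0 or 1.
    z = 1.96 corresponds to a 95% confidence level.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    margin = z * math.sqrt(p * (1 - p) / n)
    # Clamp to [0, 1] so the interval never leaves the valid range.
    return max(0.0, p - margin), min(1.0, p + margin)


# Hypothetical survey: 156 of 200 respondents report the issue (78%).
low, high = proportion_ci(156, 200)
print(f"78% reported the issue (95% CI: {low:.0%} to {high:.0%})")
```

For these hypothetical counts the interval runs from roughly 72% to 84%, tight enough to say the issue is widespread. At usability-session sample sizes this approximation breaks down, which is one reason quantitative claims need larger samples.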

Essential user research methods

Each research question calls for a different method. For technical products with complex user workflows — the kind common in climate tech, energy software, and industrial applications — the choice of method matters more than it might in simpler consumer contexts. Buyers in these categories bring domain expertise of their own, and research needs to meet them at that level of specificity.

User interviews

One-on-one conversations that generate new knowledge about user experiences, needs, and pain points. User interviews explore motivations, frustrations, and mental models that can't be observed directly.

Treat each session as a formal research study rather than a casual conversation. Open-ended questions — "Tell me about the last time you..." — surface richer responses than yes/no formats. Avoid leading questions that suggest desired answers, and aim for the 80/20 ratio: listen far more than you speak.

Best for: Discovery and empathize stages when you need to explore user problems before designing solutions. The language and mental models you capture in interviews also sharpen your sales conversations — when you understand precisely how your buyer describes their problem, you can position your product against it with specificity that's hard to achieve through guesswork.

Usability testing

Participants perform specific tasks while you observe, revealing friction points and confusion in real time.

Qualitative usability testing:

- Use "think-aloud" protocol where users speak their thoughts

- Test with 5 users to find approximately 85% of issues

- Focus on behavior over opinion

- Identify specific problems to fix
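The 85% figure comes from the problem-discovery model described by Jakob Nielsen and Tom Landauer. If each participant has probability L of encountering a given usability problem, n participants are expected to find a share 1 - (1 - L)^n of the problems. The sketch below assumes L = 0.31, the average Nielsen reported across projects. Your product's L may be higher or lower, so read the output as an illustration of diminishing returns rather than a guarantee.

```python
def share_of_problems_found(n_users: int, detection_rate: float = 0.31) -> float:
    """Estimated fraction of usability problems found by n_users.

    Uses the Nielsen-Landauer model 1 - (1 - L)^n, where L (detection_rate)
    is the probability that one participant hits a given problem.
    The 0.31 default is the average L Nielsen reported; yours may differ.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    return 1.0 - (1.0 - detection_rate) ** n_users


# Print the curve to show how each extra participant adds less.
for n in (1, 3, 5, 10):
    print(f"{n:>2} users -> {share_of_problems_found(n):.0%} of problems")
```

At that rate, five users find about 84% of problems and ten find about 98%, so doubling the sessions adds only about 13 percentage points. That diminishing return is why five users per group is the usual starting point.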

Quantitative usability testing:

- Strictly controlled conditions without think-aloud (it slows users down)

- Requires 30+ users for statistical significance

- Captures metrics like task completion rates and times

- Benchmarks performance against competitors or previous versions

Use when: Design and test stages to identify and fix friction during iterative development, or post-launch to measure performance. Usability data also gives you something concrete to show enterprise evaluators: not just claims about ease of use, but evidence that real users in comparable roles completed specific tasks without needing support.

Surveys

Quantitative measures of attitudes through closed-ended questions that gather feedback at scale.

Keep surveys short to maintain completion rates. Use them for categorizing attitudes or collecting self-reported data, and combine rating scales with optional open-ended questions where you need qualitative context. Prioritize only essential questions to avoid survey fatigue — the longer the survey, the lower the completion rate and the less reliable the final responses.

Ideal for: When you need to validate hypotheses with large samples or track satisfaction metrics over time. For B2B products with long sales cycles — common in energy, utilities, and industrial software — surveys can also help identify which aspects of the product experience are influencing renewal decisions before those conversations happen.

Field studies and ethnography

While surveys capture attitudes at scale, field studies reveal what users actually do in context. Observing users in their natural environment uncovers real-world workflows and friction points.

Minimize interference to capture authentic behavior, and combine direct observation with contextual interviews when the opportunity arises. Document environmental factors that affect how the product is used. Pay particular attention to workarounds — improvised solutions users create when the product doesn't meet their needs — which often signal unmet requirements worth addressing in the design.

Best for: Discovery and strategize stages to find unmet needs and opportunities in users' actual contexts. The workarounds you document during field studies are often your most persuasive commercial asset — they surface the specific gaps in incumbent tools that your target buyers have learned to live with.

Card sorting

Users organize information items into groups, revealing their mental models and informing information architecture.

Types: Open card sorting lets participants create their own category names. Closed card sorting asks them to organize items into predefined categories.

Best practices: Test with 15-30 users for stable structure, use the results for navigation and content hierarchy decisions, and analyze patterns in groupings rather than individual responses.

Use during: Explore and design stages when structuring navigation or content hierarchies. Well-structured navigation informed by your users' mental models directly affects whether a prospective buyer can self-educate through your product during an evaluation — before they've spoken to anyone on your sales team.
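One common way to analyze patterns in groupings rather than individual responses is a co-occurrence (similarity) matrix: for each pair of cards, the share of participants who placed both in the same group. The sketch below assumes each participant's sort is recorded as a list of sets of card labels. The card names and the three-participant data are hypothetical; a real study would use the 15-30 participants recommended above.

```python
from collections import defaultdict
from itertools import combinations


def co_occurrence(sorts: list[list[set[str]]]) -> dict[tuple[str, str], float]:
    """Share of participants who placed each pair of cards in the same group.

    `sorts` holds one entry per participant; each entry is that participant's
    list of groups, and each group is a set of card labels.
    """
    if not sorts:
        return {}
    counts: dict[tuple[str, str], int] = defaultdict(int)
    for groups in sorts:
        for group in groups:
            # sorted() gives each pair one stable key regardless of order.
            for a, b in combinations(sorted(group), 2):
                counts[(a, b)] += 1
    return {pair: c / len(sorts) for pair, c in counts.items()}


# Hypothetical open card sort with three participants.
sorts = [
    [{"Alerts", "Outages"}, {"Billing", "Invoices"}],
    [{"Alerts", "Outages", "Billing"}, {"Invoices"}],
    [{"Alerts", "Outages"}, {"Billing", "Invoices"}],
]
for pair, share in sorted(co_occurrence(sorts).items(), key=lambda kv: -kv[1]):
    print(f"{pair[0]} + {pair[1]}: {share:.0%}")
```

Pairs that nearly every participant groups together (here, Alerts and Outages) are strong candidates to sit under the same navigation heading, while pairs that split participants point to places where the structure needs more investigation.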

A/B testing

Randomly assigning users to different design variants to scientifically measure which performs better.

Best practices: Test one variable at a time for clear causation, ensure you have sufficient live traffic for statistical significance, run tests long enough to account for weekly patterns, and measure business metrics rather than just clicks.

Ideal for: Launch and optimization stages to validate design choices and improve conversion with live users. The data A/B testing produces — specific percentage lifts in trial-to-paid conversion or feature adoption — carries weight in internal review processes where multiple stakeholders need to sign off on a buying decision.
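To judge whether a difference between variants is larger than chance, a common choice for conversion-style metrics is a two-proportion z-test. The sketch below uses only the Python standard library and assumes users were randomly assigned and each user is counted once; the conversion counts are hypothetical. It is not a full experimentation framework: real tests should also fix the sample size in advance, since stopping at the first significant result inflates false positives.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test comparing the conversion rates of variants A and B.

    Returns (z, p_value). The standard error uses the pooled conversion rate,
    i.e. it assumes the null hypothesis that both variants convert equally.
    """
    if min(n_a, n_b) <= 0:
        raise ValueError("each variant needs at least one user")
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both variants at 0% or 100%: no observable difference to test.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical trial-to-paid conversions: 200/4,000 for A, 250/4,000 for B.
z, p = two_proportion_z_test(200, 4000, 250, 4000)
print(f"z = {z:.2f}, two-sided p = {p:.3f}")
```

With these hypothetical counts, a 5.0% versus 6.25% conversion rate gives a z of roughly 2.4 and a p-value of roughly 0.015, below the conventional 0.05 threshold.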

The user research process: step-by-step

Effective research follows a systematic process that transforms questions into actionable insights.

1. Define research goals and questions

Start by articulating what you need to learn and why it matters.

Condense stakeholder concerns into clear problem statements like "Users cannot find product instructions" rather than vague goals like "improve the experience."

Seven-step method:

- Determine important user tasks

- Discover system aspects of concern to stakeholders

- Group and prioritize issues

- Create specific problem statements

- List research goals for each statement

- Identify participant activities to observe

- Write realistic user scenarios

Connecting each research goal to a specific commercial outcome — a pilot conversion, a renewal decision, a reduction in onboarding time — also makes it easier to report back concretely on what changed as a result of the research.

2. Choose appropriate research methods

Select methods based on your research questions, timeline, resources, and product development stage.

By development stage:

- Strategize (discovery): Field studies and interviews to find opportunities

- Design (explore/test): Card sorting and usability testing to improve designs

- Launch (assess): Benchmarking, A/B tests, and analytics to measure performance

Resource considerations:

- Limited timeline: Expert reviews, quick interviews, unmoderated testing

- Limited budget: Guerrilla testing, online surveys, existing analytics

- Comprehensive resources: Mixed-methods combining qualitative and quantitative

The method you choose also affects how defensible your findings are when stakeholders push back on design decisions. Data from controlled usability tests is harder to dismiss than team intuition — and that distinction matters when a sales conversation is driving a product prioritization debate.

3. Recruit participants

Find and screen users who represent your target audience. Participant quality directly impacts your findings.

Sample size recommendations:

| Method | Sample size | Rationale |
|---|---|---|
| Qualitative usability testing | 5 users per group | Uncovers approximately 85% of problems; diminishing returns beyond 5 |
| User interviews | 5-6 users initially | Continue until saturation (no new themes) |
| Quantitative usability testing | 30+ users | Required for statistical significance |
| Card sorting | 15-30 users | Needed for stable information architecture |
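The "continue until saturation" guidance for interviews can be made explicit by logging which themes each interview surfaces and stopping once several consecutive interviews add nothing new. The sketch below assumes themes have already been coded per interview. The three-interview stopping window and the theme labels are hypothetical choices for illustration, not a standard threshold.

```python
from typing import Optional


def saturation_point(themes_per_interview: list[set[str]], window: int = 3) -> Optional[int]:
    """Return the 1-based interview at which saturation was reached, or None.

    Saturation here means `window` consecutive interviews that surfaced no
    theme that had not already appeared in an earlier interview.
    """
    seen: set[str] = set()
    streak = 0
    for i, themes in enumerate(themes_per_interview, start=1):
        new_themes = themes - seen
        seen |= themes
        # Reset the streak whenever an interview adds something new.
        streak = 0 if new_themes else streak + 1
        if streak >= window:
            return i
    return None


# Hypothetical coded themes from six operator interviews.
interviews = [
    {"manual override", "alert fatigue"},
    {"alert fatigue", "export to spreadsheet"},
    {"manual override", "shift handover"},
    {"alert fatigue"},
    {"export to spreadsheet", "manual override"},
    {"shift handover"},
]
print(saturation_point(interviews))
```

Here interviews 4, 5, and 6 add no new themes, so the function reports saturation at interview 6. Returning `None` is a signal to keep recruiting rather than stopping at a fixed count.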

Recruiting best practices: Match participants on relevant characteristics like behavior and usage frequency, not just demographics. Use screener surveys to filter for specific traits, overrecruit users with accessibility needs for inclusive design, and compensate everyone fairly for their time. For B2B products in regulated or enterprise markets, recruiting participants who match your actual buyers — by role and decision-making context — means your research findings will hold up when a procurement team questions whether the product was validated with users like them.
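Screener logic usually lives in the recruiting platform, but the rule it encodes is simple: admit candidates who match the target role and behavior, and exclude insiders who are easy to reach but unrepresentative. The sketch below is a hypothetical screener; the `Candidate` fields and the `TARGET_ROLES` values are invented for illustration and would be replaced with your own buyer roles and behavioral criteria.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    role: str
    uses_similar_tool_weekly: bool  # behavior: does the task regularly today
    is_pilot_contact: bool          # already close to the company (convenience sample)


# Hypothetical target roles; replace with the roles your buyers actually hold.
TARGET_ROLES = {"grid operator", "procurement manager"}


def passes_screener(c: Candidate) -> bool:
    """Screen on role and behavior, and exclude easy-to-reach insiders."""
    return (
        c.role in TARGET_ROLES
        and c.uses_similar_tool_weekly
        and not c.is_pilot_contact
    )


candidates = [
    Candidate("A", "grid operator", True, False),
    Candidate("B", "grid operator", True, True),
    Candidate("C", "marketing lead", True, False),
    Candidate("D", "procurement manager", False, False),
]
print([c.name for c in candidates if passes_screener(c)])
```

Only candidate A qualifies: B matches the role but is a pilot contact, C has the wrong role, and D lacks the relevant behavior.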

4. Conduct research sessions

Run your research plan while minimizing bias and maintaining rigor.

Facilitation guidance:

- Create comfortable environments where participants feel safe being honest

- Avoid leading questions that suggest desired answers

- Use neutral prompts: "What do you think about this?" instead of "Don't you think this is clear?"

- Observe behavior, not just what participants say they would do

- Take detailed notes or record sessions (with permission)

The three biases most likely to compromise your sessions are confirmation bias (seeking only evidence that supports existing beliefs), social desirability bias (participants answering to please you rather than tell the truth), and leading (influencing responses through how you phrase questions). Sessions that surface genuine friction — rather than confirming what you already believe — give you the specific evidence you need to address issues before your product reaches a high-stakes enterprise evaluation.

5. Analyze and synthesize findings

Once you've completed your sessions, transform raw data into meaningful insights and prioritized recommendations.

Analysis techniques:

- Affinity diagramming: Collaboratively cluster findings to identify patterns

- Thematic analysis: Systematically identify recurring themes across qualitative data

- Statistical analysis: Calculate metrics like task success rates, time-on-task, and satisfaction scores
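Task success rate and time-on-task are straightforward to compute from session logs. The sketch below assumes a hypothetical log of (participant, completed, seconds) rows. It summarizes time-on-task with a geometric mean over successful attempts, a common choice because task times are usually right-skewed; some teams also report times for all attempts, so treat this as one reasonable option rather than the only correct summary.

```python
import math
from statistics import geometric_mean

# (participant, completed_task, seconds_on_task); hypothetical session log.
sessions = [
    ("P1", True, 48.0),
    ("P2", True, 95.0),
    ("P3", False, 240.0),
    ("P4", True, 62.0),
    ("P5", True, 310.0),
]


def task_metrics(rows: list[tuple[str, bool, float]]) -> tuple[float, float]:
    """Return (task success rate, geometric mean seconds for successful attempts)."""
    if not rows:
        raise ValueError("no sessions to analyze")
    success_times = [secs for _, completed, secs in rows if completed]
    success_rate = len(success_times) / len(rows)
    # Task times are usually right-skewed, so the geometric mean is less
    # distorted by one very slow attempt than the arithmetic mean.
    time_on_task = geometric_mean(success_times) if success_times else math.nan
    return success_rate, time_on_task


rate, seconds = task_metrics(sessions)
print(f"Success rate: {rate:.0%}, time on task (geometric mean): {seconds:.0f}s")
```

In this hypothetical data, the single 310-second attempt pulls the arithmetic mean of successful times up to about 129 seconds, while the geometric mean stays near 97.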

Synthesis process: Identify patterns across multiple participants rather than individual outliers, develop insights that explain why those patterns exist, prioritize recommendations by impact and implementation effort, and link findings to business outcomes like revenue, retention, and support costs.
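Once observations are tagged, "patterns across multiple participants rather than individual outliers" can be checked mechanically: count how many distinct participants hit each theme, not how many times it was mentioned. The sketch below assumes a hypothetical list of (participant, theme) tags, such as an export from a research repository, and keeps themes raised by at least two participants; that threshold is an illustrative assumption.

```python
from collections import defaultdict

# (participant_id, theme) pairs from tagged session notes; hypothetical data.
tags = [
    ("P1", "can't find override"), ("P1", "can't find override"),
    ("P2", "can't find override"), ("P3", "can't find override"),
    ("P2", "export confusion"), ("P4", "export confusion"),
    ("P5", "wants bulk edit"),
]


def themes_by_reach(tags: list[tuple[str, str]], min_participants: int = 2) -> list[tuple[str, int]]:
    """Rank themes by how many distinct participants raised them.

    Themes seen in fewer than `min_participants` people are treated as
    individual outliers and dropped.
    """
    participants: dict[str, set[str]] = defaultdict(set)
    for pid, theme in tags:
        participants[theme].add(pid)  # a set, so repeat mentions by one person count once
    ranked = sorted(participants.items(), key=lambda kv: len(kv[1]), reverse=True)
    return [(theme, len(pids)) for theme, pids in ranked if len(pids) >= min_participants]


print(themes_by_reach(tags))
```

P1 mentions the override problem twice but is counted once, so the ranking reflects how widespread a theme is rather than how vocal a single participant was.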

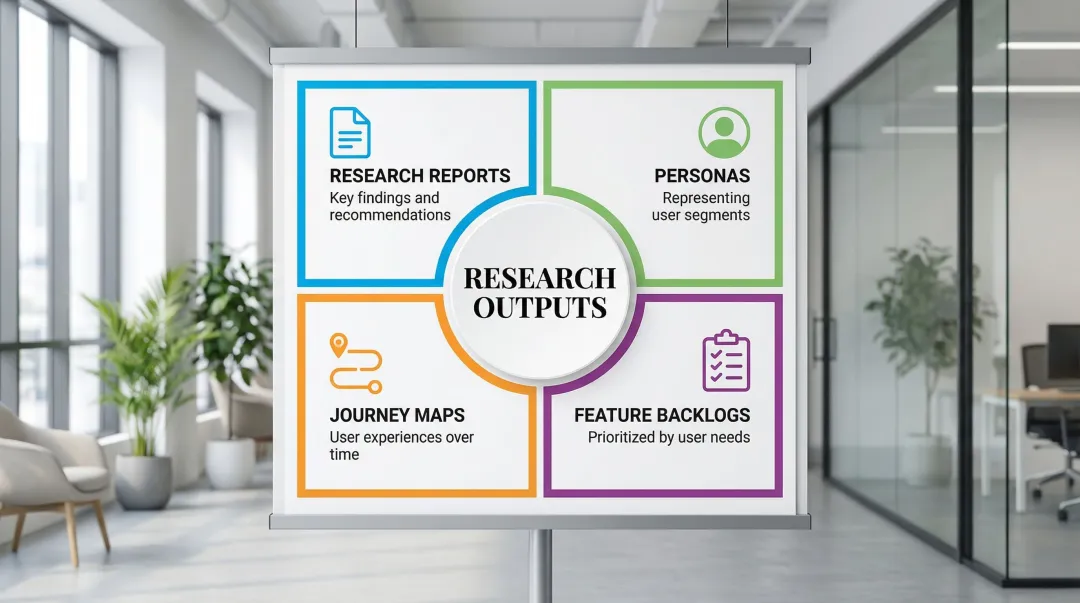

Deliverables:

- Research reports with key findings and recommendations

- Personas representing user segments

- Journey maps showing user experiences over time

- Prioritized feature backlogs based on user needs

Research only creates commercial value when findings connect directly to product decisions that get implemented. Build your deliverables to answer a specific question that a product or commercial decision depends on.

Common user research mistakes to avoid

Even experienced researchers make these mistakes. Knowing them in advance won't make you immune, but it will help you catch them earlier.

Leading questions and confirmation bias

Leading questions prompt specific answers, skewing your data. "What do you think this button does?" implies it does something specific, causing users to guess rather than respond naturally.

- ❌ Leading: "How much do you love this feature?"

- ✅ Neutral: "What's your reaction to this feature?"

- ❌ Leading: "Was this process easy?"

- ✅ Neutral: "How would you describe that process?"

Confirmation bias occurs when researchers value information confirming existing beliefs while dismissing contradictory evidence. If you only highlight users who preferred your design while ignoring those who struggled, you're falling into this trap.

In practice, confirmation bias is particularly hard to catch because it often feels like pattern recognition rather than bias. When you've worked on a product for months, you develop strong intuitions about what works — and those intuitions will unconsciously direct your attention during sessions toward observations that confirm them. A useful check: after each session, write down two or three things the participant did that surprised you or contradicted your expectations. If you can't find any, you may not be looking hard enough.

To avoid these biases, write your questions in advance and review them for leading language, have a colleague critique your discussion guide before the session, actively seek disconfirming evidence while you're in the room, and report all findings — not just those that support your hypothesis. Biased research produces a false sense of confidence — one that often surfaces at the worst moment, when an enterprise buyer runs their own evaluation and finds friction your internal testing didn't catch.

Researching the wrong users or too small a sample

Beyond avoiding biased questions, you need the right participants. The five-user guideline holds for qualitative usability discovery, not for quantitative studies, interviews, or statistical benchmarking. Using 5 users for quantitative metrics produces unreliable results because the margin of error at that sample size is too wide to support conclusions about the broader user population.

The "Recruit participants" step above covers specific sample size requirements by method. The practical point here is that context matters: 5 users can reveal most usability issues in a qualitative session, but that same number tells you almost nothing in a benchmark study or survey.
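To see why five users cannot support quantitative claims, compare the confidence intervals around the same 80% task completion rate at two sample sizes. The sketch below uses the adjusted Wald (Agresti-Coull) interval, which adds z²/2 successes and z² trials before applying the normal approximation and is often recommended for small usability samples. The 4-of-5 and 24-of-30 results are hypothetical.

```python
import math


def adjusted_wald_ci(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Adjusted Wald (Agresti-Coull) confidence interval for a completion rate.

    z = 1.96 corresponds to a 95% confidence level.
    """
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    n_adj = n + z**2
    p_adj = (successes + z**2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


# Same 80% completion rate, two sample sizes.
for successes, n in [(4, 5), (24, 30)]:
    low, high = adjusted_wald_ci(successes, n)
    print(f"{successes}/{n} completed: 95% CI {low:.0%} to {high:.0%}")
```

Both groups completed 80% of attempts, but the five-user interval spans roughly 36% to 98%, too wide to say anything useful about the broader population, while 30 users narrow it to roughly 62% to 91%.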

For B2B products in technical industries, there's a specific version of this mistake worth naming: recruiting users who are accessible rather than users who are representative. The engineer on your advisory board, the friendly contact at a pilot customer, the product manager at a company that's already bought in — these people are easy to reach, but they're not typical users. They understand your product's context, tolerate more complexity, and are often invested in your success. Research with them will produce feedback that's more forgiving than what an average evaluator would give — which means you'll systematically underestimate the friction real users encounter.

Recruit based on relevant behaviors, not just demographics. Include users with varying skill levels and accessibility needs. Avoid convenience sampling (friends, coworkers) unless they genuinely match your target audience.

Confusing what users say with what they do

Users are generally poor predictors of their own behavior. In interviews and surveys, they describe an idealized version of their workflow — the way they'd like to work, or the way they think they should work. Observation-based methods frequently tell a different story.

A user might report that they always review documentation before using a new feature. Analytics show most skip it entirely. A user might say they want more data density in a dashboard. What you observe is that they rarely scroll past the first screen.

This is one reason combining qualitative and quantitative methods matters — not just for statistical validation, but because each approach catches different types of error. Interviews and surveys capture stated behavior. Usability testing and analytics capture actual behavior. When these diverge, the observed data is almost always more reliable.

The practical implication: treat what users tell you they want as a hypothesis to test, not a brief to execute. Design based on observed struggles, not stated preferences. This gap between stated and observed behavior tends to become most visible at the pilot stage, when users who aren't invested in your success encounter the product under realistic conditions — and behave differently from what your interviews predicted.

Research without action

The most common failure mode is conducting research that never influences decisions. Studies fail to demonstrate impact when insights sit in reports without post-implementation tracking.

To ensure your research drives decisions:

- Tie research goals to specific decisions upfront

- Present findings to decision-makers, not just designers

- Create actionable recommendations, not just observations

- Track metrics after implementing changes to prove impact

- Build a measurement plan showing how you'll evaluate outcomes

The practical standard: research is complete only when you can point to a metric that changed after implementation. Task completion rate, time-on-task, support ticket volume, trial-to-paid conversion — whatever your product's relevant indicators are. Without that loop closed, the work remains a hypothesis.

Tools and resources for user research

Rather than an exhaustive directory, what follows is what actually works in practice — particularly for technical B2B product teams running research without a dedicated research operations function. The short recommendation: start with the minimum stack that covers recruitment, analysis, and one testing method. Add specialized tools only when you have a clear gap that justifies the overhead of maintaining another platform.

Research and recruiting platforms

UserTesting: Unmoderated and moderated usability testing with think-aloud research. Access diverse participant panels for quick feedback on prototypes and live products. Useful for getting directional feedback quickly — though if you need tight control over participant profiles for a specialized B2B persona, panel quality can be inconsistent. Better suited to initial discovery rounds than high-stakes validation studies with specific user criteria.

User Interviews: Participant recruitment from a 6M+ panel. Key features:

- Screener surveys with logic branching

- Automated scheduling and calendar sync

- Integrated incentive distribution

- The go-to for B2B recruiting when you need engineers, procurement managers, or other professional personas that are difficult to source through general panels

dscout: Mobile diary studies capturing in-context moments over days or weeks. Participants record video responses in their natural environment — one of the few tools that can capture authentic use patterns rather than lab behavior. Worth using when you suspect research sessions aren't reflecting how users actually work day-to-day, or when the product is embedded in a physical workflow that's hard to replicate in a standard session.

Analysis and collaboration tools

Dovetail: Centralized research repository storing transcripts, tags, and insights. If your team runs research regularly and struggles to track what's already been learned, Dovetail solves the institutional memory problem. Teams can search across 100+ interviews to surface patterns and avoid duplicating research that's already been done.

Miro: Digital whiteboard for affinity diagramming and synthesis workshops. Works especially well when you want non-researchers — developers, product managers, founders — to participate in synthesis rather than just receive a finished report. Shared synthesis sessions tend to produce faster buy-in on findings than a slide deck delivered after the fact.

Airtable: Flexible database for tracking participants across multiple studies. Create custom views to filter by demographics, track incentive payments, and link interview recordings to participant profiles.

Prototyping and testing tools

Figma: Industry-standard design tool for creating interactive prototypes. Real-time collaboration lets designers iterate during user sessions based on immediate feedback.

Maze: Unmoderated usability testing with quantitative metrics:

- Heatmaps showing where users click

- Task success rates and completion times

- Misclick analysis and user paths

- Direct integration with Figma prototypes

The quantitative output is useful for building the case for design changes in organizations that require data-backed decisions, and for benchmarking improvements across design iterations.

UsabilityHub (now Lyssna): Rapid testing for early-stage concepts. Run five-second tests, first-click tests, and preference tests to validate designs before building prototypes. Results are typically available within hours, which makes it useful for quick directional checks before committing to a design direction.

Putting research into practice

The methods and tools above are well-established. The harder part is building a research practice within a team that's moving fast, often without a dedicated researcher on staff. For technical founding teams at early-stage companies, the temptation is to treat user research as something to add later, once the product is more mature.

That's typically when the friction becomes hardest to reverse. Assumptions get embedded in the architecture. Workflows get built around internal logic rather than user behavior. The cost to correct them climbs as the codebase grows.

At What if Design, we work with technical founders and product teams to establish research-informed design processes from early stages — particularly for climate tech and deep-tech products where user workflows are complex and the cost of a poor product experience shows up in slow adoption, not just support tickets. If your product experience hasn't been tested with real users at your current stage, that's a concrete starting point worth addressing.

Frequently asked questions

What is UX design and research?

UX design covers all aspects of user interaction with products and services, from interface to overall experience. UX research systematically studies users through qualitative and quantitative methods to understand behaviors, needs, and motivations that inform design decisions.

How do UX designers do user research?

Designers conduct research through interviews (exploring user needs), usability tests (observing task completion), and surveys (measuring attitudes at scale). Methods depend on research questions, timeline, and product development stage.

What is the 80/20 rule in UX?

The Pareto Principle in UX suggests that 80% of users typically use 20% of features. Research helps identify which features matter most so you can prioritize development resources accordingly. Rather than building everything, focus on the features users rely on most and optimize those for the greatest impact on satisfaction and engagement.
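Checking whether your own product follows this pattern is a short calculation over feature-usage analytics: sort features by usage and find how few of them account for 80% of events. The event counts below are hypothetical, and "usage events per feature" is an assumed export format from whatever analytics platform you use.

```python
def features_covering(usage: dict[str, int], target: float = 0.8) -> list[str]:
    """Smallest set of top features whose combined usage reaches the `target` share."""
    total = sum(usage.values())
    if total == 0:
        return []
    covered, chosen = 0, []
    # Walk features from most to least used until the target share is reached.
    for feature, count in sorted(usage.items(), key=lambda kv: kv[1], reverse=True):
        chosen.append(feature)
        covered += count
        if covered / total >= target:
            break
    return chosen


# Hypothetical event counts per feature over one month.
usage = {
    "live dashboard": 5200, "alerts": 2900, "reports": 900, "export": 500,
    "user admin": 250, "API keys": 150, "audit log": 60, "themes": 40,
}
top = features_covering(usage)
print(f"{len(top)} of {len(usage)} features cover at least 80% of usage: {top}")
```

In this hypothetical data, 2 of 8 features (25%) account for 81% of events. Usage counts show what people use, not why, so pair the result with the qualitative methods above before deprioritizing a feature.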

What are the 4 C's of UX design?

The 4 C's framework defines consistency (predictable patterns across the interface), continuity (seamless transitions across devices and sessions), context (adapting presentation and function to the user's current situation), and complementary (using each platform's native strengths rather than fighting them). User research is what tells you whether your product is actually delivering on these principles for your specific users in their specific contexts, rather than assuming the design intent translated into real-world experience.