What separates UX experts from everyone else

Your product works. Your users are adopting it. But somewhere between the demo and the dashboard, people are slowing down, dropping off, or calling your support team for answers that should be obvious from the interface.

This is rarely a feature problem. It's a design thinking problem. Most product teams working in climate tech, energy software, or deep-tech build fast and iterate faster, which means UX decisions get made on instinct rather than evidence. The result is onboarding that hasn't scaled, flows that feel bolted together, and interfaces that make sense to the people who built them but confuse everyone else.

Here's what actually separates expert-level UX work from everything else: the ability to connect research to decisions, decisions to behavior, and behavior to outcomes your business can measure. This article breaks down six areas where that difference shows up most clearly, and what you can do about each one.

TL;DR: the core principles of expert UX work

- Ask "why" questions to reveal underlying motivations, not just "what" questions that capture surface behaviors

- Develop genuine empathy through direct user contact, not just personas built in a workshop

- Connect every design decision to measurable outcomes: activation rates, retention, support ticket reduction

- Know when to follow best practices and when to deviate, based on evidence rather than instinct

Research: experts ask better questions

Why questions outperform what questions

Expert UX designers frame research around "why" questions that reveal underlying motivations rather than "what" questions that only capture behaviors. While quantitative data shows what users are doing (where they click, how long they stay, where they drop off), qualitative research uncovers why they behave that way, and that distinction is what prevents costly development mistakes.

Consider checkout abandonment: analytics might show a 21% drop-off rate, but only qualitative research reveals the specific reason. In one well-documented case, users couldn't see total costs upfront. That "why" insight directly informs the solution: transparent pricing display. Without understanding the motivation behind the behavior, you risk implementing fixes that address the symptom rather than the cause. For a technical founder in an enterprise evaluation, that distinction is critical: a fix that addresses the symptom might clear your internal QA but fail the usability review a procurement team runs before signing off.
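The quantitative side of this is easy to make concrete. Here is a minimal sketch of how a per-step drop-off rate like that 21% figure is typically derived from funnel counts; the step names and user counts are hypothetical. The output pinpoints where users leave but says nothing about why, which is exactly the gap qualitative research fills.

```python
# Hypothetical checkout funnel: (step name, users who reached that step).
FUNNEL = [
    ("cart", 10_000),
    ("shipping", 7_900),
    ("payment", 7_110),
    ("confirmation", 6_754),
]


def step_dropoff(funnel):
    """Return (step, drop-off rate) for each step after the first.

    Drop-off is the share of users who reached the previous step
    but did not reach this one.
    """
    rates = []
    for (_, prev_users), (step, users) in zip(funnel, funnel[1:]):
        rates.append((step, 1 - users / prev_users))
    return rates


if __name__ == "__main__":
    for step, rate in step_dropoff(FUNNEL):
        print(f"{step:<13} {rate:.1%} drop-off")
```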

Strategic research planning

Top designers know exactly what they need to learn before starting any research activity. They design studies that eliminate bias and focus on actionable insights rather than interesting but inconclusive findings.

This planning discipline means defining specific research questions tied to design decisions, selecting methods based on what you need to learn, creating discussion guides that surface honest feedback, and recruiting participants who represent your actual users — not whoever is available.

This approach plays out differently depending on the domain. In climate tech and energy software, the user population is often narrow and hard to recruit: a utility operations manager, an enterprise sustainability director, or a field technician with fifteen minutes between site visits. Research planning has to account for these constraints from the start, or you end up with findings that don't reflect how the product actually gets used.

Consider a carbon accounting platform whose two weeks of research scoping revealed that its target users, sustainability managers at large manufacturers, were reachable only in fifteen-minute windows and primarily accessed the product on mobile. Planning for those constraints upfront reshaped both the research approach and the roadmap before a single screen was designed, and it avoided a three-month delay that would otherwise have hit during the buyer pilot.

Synthesis over reporting

Junior designers often report what users said. Top designers synthesize research findings into actionable insights, connecting patterns across multiple data sources and looking for the underlying themes that explain behavior rather than cataloguing individual quotes.

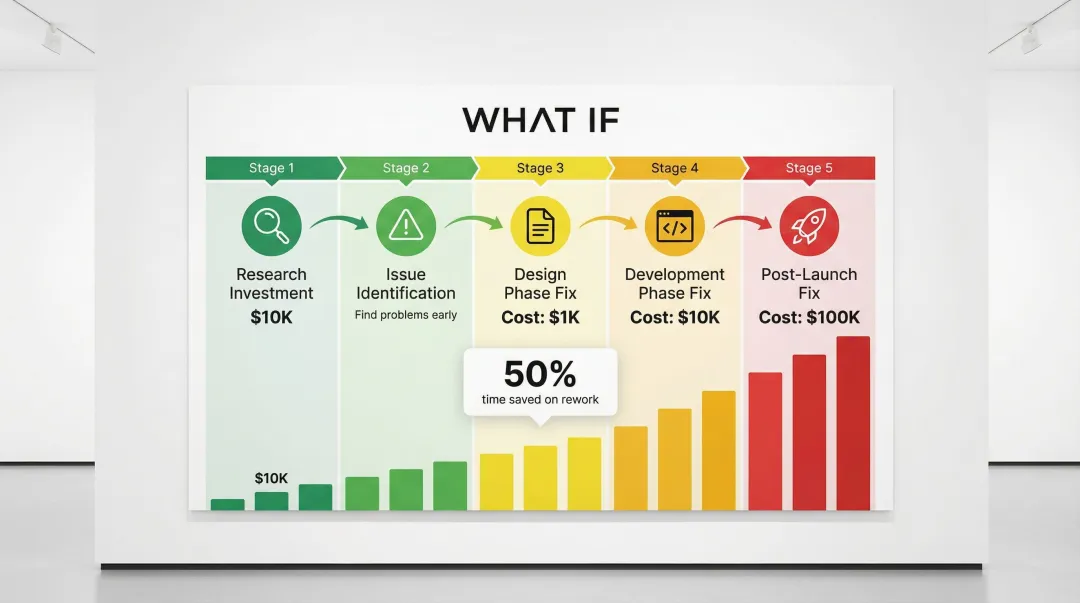

Commonly cited industry estimates put the return on research-driven design at 10x to 100x, mostly because research prevents expensive rework. Developers are estimated to spend around 50% of their time on avoidable rework, and fixing an error after development is often said to cost up to 100 times more than addressing it during design. Expert synthesis catches these issues early, when they're cheapest to fix. In a competitive evaluation, the ability to point to research-backed design decisions, rather than instinct, is also what separates vendors that make it through procurement review from those that don't.

Continuous validation

Top designers validate assumptions continuously throughout the design process, not just at the beginning. For climate tech and energy software teams, this matters more than in consumer products because your users are often utility managers, procurement leads, or field technicians who won't tell you something is confusing unless you watch them use it. Lightweight research methods that work within these constraints include quick moderated usability sessions with 3 to 5 representative users, remote unmoderated studies that return results within days, and short follow-up interviews after onboarding milestones.

This approach prevents the common mistake of conducting research once and then designing for months on assumptions that have since shifted. Markets change, user expectations evolve, and the regulatory context your product sits inside can shift significantly between research sprints. Validation checkpoints catch these shifts before they derail projects. In our experience with energy software teams, research done at project kick-off goes stale faster than anyone expects.

One energy software team running quarterly validation checkpoints discovered mid-project that its primary users had adopted a new interface standard that changed their data input expectations entirely. Without that checkpoint, the disconnect would have surfaced during customer acceptance testing, at exactly the point in the sales cycle when a delay is least affordable.
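The 3-to-5-user guideline for quick usability sessions is usually traced to a simple problem-discovery model from Nielsen and Landauer, in which each participant independently uncovers a fixed share of the usability problems. The sketch below assumes their often-quoted average per-participant discovery rate of about 31%. Treat that rate as an assumption: it varies widely by product and task, and narrow, specialized user groups such as grid operators may differ substantially.

```python
# Problem-discovery model: share of usability problems found with n
# participants, assuming each participant independently reveals a
# fraction `rate` of the problems. The 0.31 default is an assumption
# taken from the commonly cited Nielsen & Landauer average.
def share_found(n_participants: int, rate: float = 0.31) -> float:
    if n_participants < 0:
        raise ValueError("n_participants must be non-negative")
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return 1 - (1 - rate) ** n_participants


if __name__ == "__main__":
    for n in (1, 3, 5, 10):
        print(f"{n:>2} participants: ~{share_found(n):.0%} of problems found")
```

Under this model, three sessions surface roughly two-thirds of the problems and five surface about 84%, which is one argument for running several small rounds across a project rather than one large study at the start.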

Empathy: moving beyond user personas

Direct contact over fictional profiles

Expert UX designers go beyond creating personas to developing genuine empathy through direct user contact and immersive research methods. Personas serve as useful communication tools, but they're abstractions that can oversimplify complex human behavior. Real empathy comes from watching users struggle with your product in real time, hearing the frustration in their voices, and understanding the context they're operating in when they use it.

Contextual inquiry, which means observing users in their actual environment rather than a lab, surfaces insights that structured interviews rarely do. Users frequently leave out critical details when summarizing their own processes; observing them in context reveals their reasoning, mental models, and workarounds in real time. In one case, field studies led to a 50% reduction in project scope by clarifying exactly what was needed, resulting in a 300% ROI for the time spent on research.

If you're building grid management tools, carbon accounting software, or clean energy infrastructure products, contextual inquiry will often reveal that the stated workflow and the actual workflow are very different. In our work with climate tech clients, this gap is almost always larger than the team expects — the process described in a kickoff meeting rarely survives contact with the person actually operating the tool day-to-day. Users in regulated industries have workarounds, compliance requirements, and institutional habits that no interview script will surface on its own. When that gap surfaces during a pilot review rather than in your research, it becomes a credibility problem that's hard to recover from.

Balancing user needs with business reality

Experts balance user needs with business constraints and technical feasibility. They don't advocate blindly for users; they find solutions that work for both sides. Anyone can say "users want this feature," but experts ask:

- What business value does this feature create?

- What's the development cost versus expected return?

- Does this align with our strategic direction?

- What are we not building if we prioritize this?

The most effective approach is to treat user research and business constraints as inputs to the same problem. When a feature request comes in, the question isn't "should we build this for users" but "what outcome does this create, for whom, and at what cost relative to the alternatives." This discipline also signals product maturity to enterprise buyers: teams that evaluate features through a business lens — rather than just building what users ask for — tend to earn more trust during technical evaluations and pilot reviews.

Walking in user shoes

Experts practice walking in user shoes through techniques like contextual inquiry, shadowing, and experiencing the product as different user types would. This immersive approach reveals friction points that users might not articulate in interviews.

Case study: Baileigh Industrial

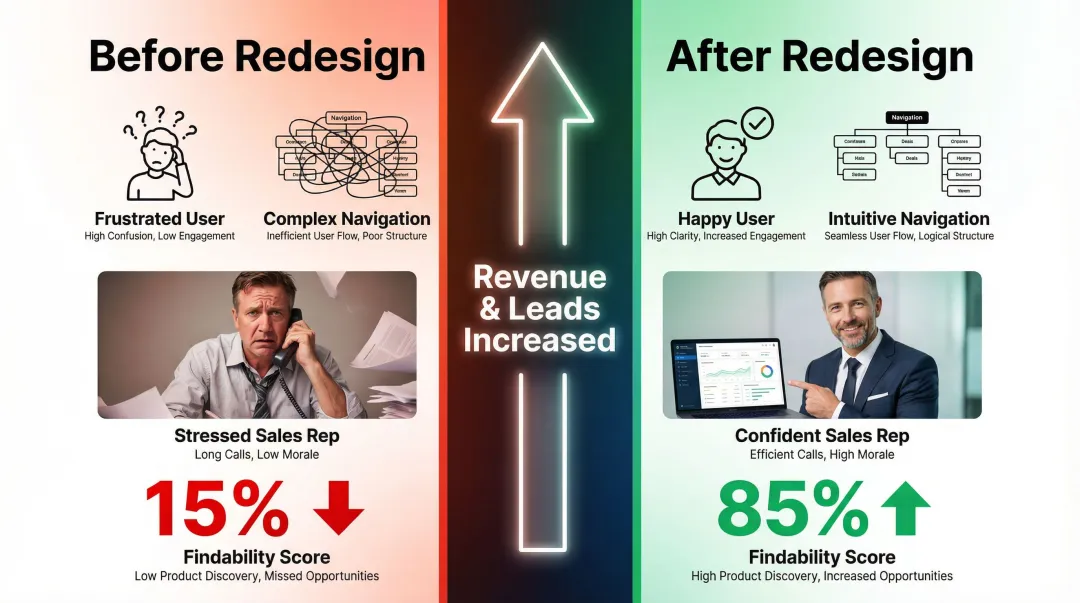

A manufacturing equipment company faced a concrete problem: sales representatives were flooded with simple inquiries because customers couldn't find information on the website. Qualitative interviews with sales reps and usability testing revealed that the site's navigation structure was the critical barrier, not the content itself.

A research-driven overhaul of the information architecture resulted in an 85% improvement in findability scores and substantial increases in revenue and leads. The parallel for your product is direct: if a buyer can't find the information they need during evaluation, your sales team absorbs that friction — in extended cycles, extra calls, and deals that stall without a clear reason.

Designing for edge cases and accessibility

Experts identify and design for edge cases and accessibility needs, not just the average user. In climate tech and energy software, edge cases are often the rule rather than the exception. A grid monitoring tool might need to work in low-bandwidth environments. A carbon accounting platform might need to handle users uploading data from multiple subsidiary systems with inconsistent formatting. A solar installation app might be used outdoors in direct sunlight by someone wearing work gloves.

According to the World Health Organization, an estimated 1.3 billion people, about 16% of the global population, experience significant disability. Designing for this segment improves usability for everyone, not just people with permanent disabilities. Accessibility improvements also reduce legal risk and, in regulated industries, can be a procurement requirement. Experts recognize that edge cases often reveal design weaknesses that affect all users to varying degrees.

Business impact: tying UX to metrics that matter

Speaking the language of business

Expert UX designers speak the language of business: conversion rates, retention, customer lifetime value, support ticket reduction. Not just "better user experience." Strategic designers distinguish themselves by translating user needs into business outcomes. Executives don't fund projects to improve usability; they invest in initiatives that drive revenue, reduce costs, or create competitive advantages.

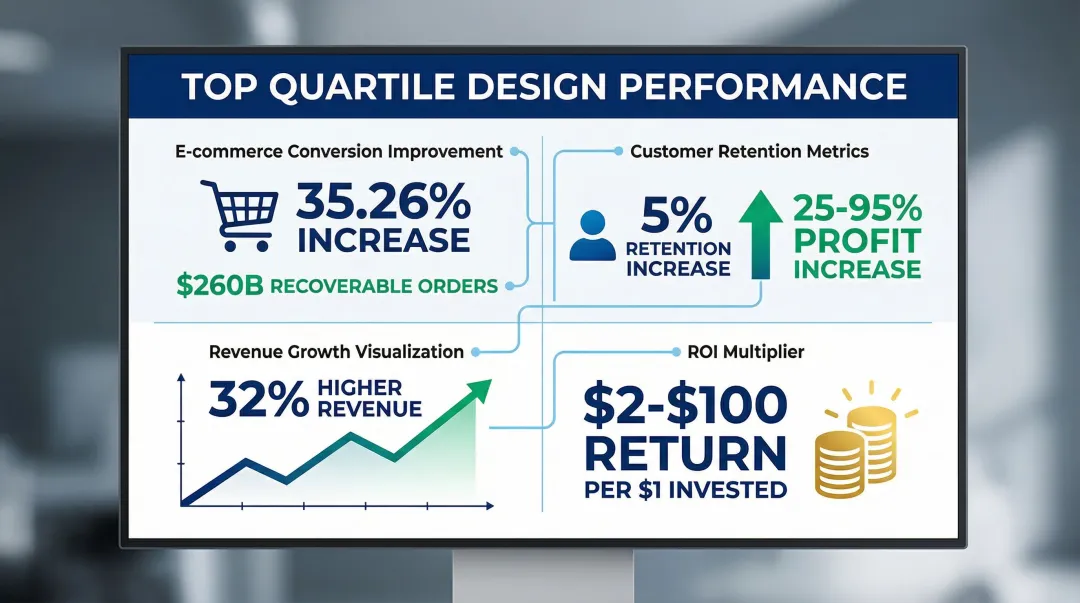

According to McKinsey's 2018 study The Business Value of Design, companies in the top quartile of design performance achieved 32 percentage points higher revenue growth and 56 percentage points higher total returns to shareholders than their industry counterparts over five years. Top designers use statistics like these to build business cases for UX investment, connecting design improvements to outcomes leadership cares about.

Establishing baselines and measuring impact

Establishing baseline metrics before design changes and measuring impact afterward is what makes UX value concrete. This before-and-after approach demonstrates ROI in terms that finance and leadership can act on: when a conversion rate moves from 2.3% to 3.8% after a redesign, that is a relative lift of about 65%, and multiplying the additional conversions by revenue per customer turns it into a dollar figure leadership can plan around.

In one case, implementing research recommendations for an onboarding flow increased conversion by 18%, generating $1.2 million in additional annual recurring revenue. In another, a $12,000 feature prioritization study prevented a $400,000 investment in a feature that would have seen minimal adoption: a return of roughly 33x in one quarter.
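The arithmetic behind both kinds of claims is simple enough to sketch. The functions below are hypothetical helpers, and the prospect volume and revenue-per-customer inputs are made-up placeholders, not figures from the cases above. The point is that a conversion-rate change only becomes a dollar figure once you multiply it by volume and revenue per customer.

```python
def relative_lift(baseline_rate: float, new_rate: float) -> float:
    """Relative change in conversion rate (0.65 means +65%)."""
    return (new_rate - baseline_rate) / baseline_rate


def added_annual_revenue(baseline_rate: float, new_rate: float,
                         annual_prospects: int,
                         revenue_per_customer: float) -> float:
    """Extra yearly revenue from a conversion-rate change.

    Assumes prospect volume and revenue per customer stay the same
    before and after the redesign.
    """
    extra_customers = (new_rate - baseline_rate) * annual_prospects
    return extra_customers * revenue_per_customer


def roi_multiple(value_created: float, cost: float) -> float:
    """Value created (or cost avoided) per dollar spent."""
    return value_created / cost


if __name__ == "__main__":
    # Rates from the text; prospect volume and revenue are placeholders.
    lift = relative_lift(0.023, 0.038)
    revenue = added_annual_revenue(0.023, 0.038,
                                   annual_prospects=40_000,
                                   revenue_per_customer=2_400)
    print(f"Relative lift: {lift:.0%}")
    print(f"Added annual revenue: ${revenue:,.0f}")
    # $400,000 avoided investment vs. a $12,000 study, from the text.
    print(f"Study ROI: {roi_multiple(400_000, 12_000):.1f}x")
```

With those placeholder inputs, a 2.3% to 3.8% change is worth about $1.44M a year; halve the prospect volume and it is worth half as much, which is why the volume and revenue assumptions belong in the business case alongside the rates.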

Creating business cases for UX work

Strong business cases translate user problems into business language. The structure looks like this:

User problem: "Users can't find product information easily"

Business impact: "Sales team spends 60% of time answering basic questions, reducing capacity for high-value deals by $500K annually"

Design solution: "Improved information architecture and search functionality"

Expected outcome: "Reduce basic inquiry volume by 40%, freeing sales capacity worth $200K annually"
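To keep that structure consistent from proposal to proposal, it can be captured as a small record type. This is a hypothetical sketch, not a standard template: the field names are illustrative, and the expected value assumes freed capacity scales linearly with inquiry volume, which reproduces the $200K figure from the example ($500K × 40%).

```python
from dataclasses import dataclass


@dataclass
class BusinessCase:
    """One UX business case, mirroring the four-part structure above."""
    user_problem: str
    business_impact: str
    design_solution: str
    annual_capacity_cost: float  # dollars of capacity lost per year
    expected_reduction: float    # projected share of that cost removed (0-1)

    def expected_annual_value(self) -> float:
        """Capacity freed per year, assuming a linear relationship."""
        return self.annual_capacity_cost * self.expected_reduction

    def summary(self) -> str:
        return (f"Problem: {self.user_problem}\n"
                f"Impact: {self.business_impact}\n"
                f"Solution: {self.design_solution}\n"
                f"Expected outcome: ${self.expected_annual_value():,.0f} "
                f"in freed capacity per year")


if __name__ == "__main__":
    case = BusinessCase(
        user_problem="Users can't find product information easily",
        business_impact="Sales spends 60% of its time on basic questions",
        design_solution="Improved information architecture and search",
        annual_capacity_cost=500_000,
        expected_reduction=0.40,
    )
    print(case.summary())
```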

If you're selling into long cycles with multiple stakeholder types — a utility evaluator, an enterprise sustainability director, and a procurement officer all needing to sign off — this framing matters even more. The design decisions that support each of those journeys have measurable impact on deal velocity. What if Design works with climate tech and deep-tech clients to align design decisions with these commercial realities, helping teams identify where UX friction is slowing down pilot approvals, partner conversations, or onboarding timelines.

Quantifying UX impact

The business impact of strong UX is measurable across multiple dimensions:

- The average large e-commerce site can gain a 35.26% increase in conversion rate through better checkout design, according to Baymard Institute research

- For combined US and EU e-commerce, this translates to $260 billion in recoverable lost orders

- Increasing customer retention by just 5% can increase profits by 25% to 95%, according to Bain & Company

- General UX ROI estimates suggest returns of $2 to $100 for every $1 invested

These figures give you the evidence you need to secure resources, make the case for design investments, and demonstrate measurable value across your organization.

Process: knowing when to break the rules

Understanding rules deeply before breaking them

Expert designers know UX best practices deeply but also understand when context demands departing from those conventions. The key is informed deviation based on evidence and specific circumstances, not arbitrary disregard for convention.

Microsoft's Bing team tested adding site links to ads, a change that made each ad take up more vertical space and, on the surface, looked likely to hurt user engagement. The experiment showed it increased revenue by tens of millions of dollars annually with no measurable harm to engagement. That outcome was only possible through rigorous testing rather than defaulting to existing heuristics. If you're defending an unconventional design choice in an enterprise evaluation, test data like this turns a potential liability into a differentiator.

Adapting process to project context

This context-driven thinking extends to process methodology itself. Experts adapt their workflow based on project constraints rather than following a one-size-fits-all approach:

Startup MVP: Prioritize rapid validation over comprehensive documentation. Use lean UX methods with quick feedback loops, focus on core user flows, defer edge cases, and accept that launching with "good enough" and iterating is the right call.

Enterprise product: The bar is higher before launch. You need extensive stakeholder alignment and documentation, accessibility compliance and governance requirements, and comprehensive testing across user segments.

Product redesign: Start by understanding why the current design exists before changing it. Measure baseline performance metrics, plan a phased rollout to manage risk, and handle change management carefully with existing users.

Getting this calibration wrong — applying enterprise-grade overhead to an MVP, or shipping an enterprise product with startup-speed process — is one of the fastest ways to erode buyer confidence during a pilot.

Informed rule-breaking

Experts intentionally deviate from conventions when they have evidence it will serve users and business goals better. Valid evidence comes from user research showing your audience has different needs than typical users, A/B testing that demonstrates the unconventional approach performs better, technical constraints that make standard patterns impractical, or strategic differentiation goals that require breaking from category norms.

The deciding factor is clear rationale and data, not personal preference or a desire to do something different. In a buyer conversation, being able to explain that rationale — why your interface breaks from the standard pattern and what evidence supports it — turns a potential objection into a credibility signal.
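For the A/B testing case, a common way to check whether an unconventional variant really outperforms the standard pattern is a two-proportion z-test on conversion counts. The sketch below uses only the Python standard library; the visitor and conversion counts are hypothetical, and it assumes samples large enough for the normal approximation to hold and a test analyzed once at its planned sample size rather than checked repeatedly along the way.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z statistic, p-value), using the pooled rate under the
    null hypothesis that both variants convert equally well.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Hypothetical: standard pattern (A) vs. unconventional variant (B).
    z, p = two_proportion_z_test(conv_a=230, n_a=10_000,
                                 conv_b=280, n_b=10_000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A p-value below the threshold you set in advance (0.05 is conventional) suggests the difference is unlikely to be noise; whether the lift is large enough to justify breaking the convention is still a product and business judgment.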

Communication: presenting ideas without overselling them

Presenting solutions, not preferences

Expert UX designers present design decisions as solutions to specific problems rather than subjective preferences, using data and user insights to build the case. Instead of "I think we should use this layout," experts frame it as: "User testing showed that 8 out of 10 participants completed the task successfully with this layout, compared to 4 out of 10 with the alternative. This directly addresses our goal of reducing checkout abandonment."

This evidence-based approach removes ego from the discussion. When stakeholders disagree, experts point to research findings and business metrics rather than defending aesthetic choices. This same approach applies in buyer presentations: design decisions grounded in research hold up under scrutiny in ways that preference-based choices don't, and that credibility is often what determines whether an evaluation continues.
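With samples as small as the 8-of-10 versus 4-of-10 example, the headline completion rates carry real uncertainty, and a statistically literate stakeholder may ask about it. One widely recommended way to report small-sample completion rates is the adjusted-Wald (Agresti-Coull) confidence interval. The sketch below applies it to those two figures; the 95% level (z = 1.96) is an assumption you can change.

```python
import math


def adjusted_wald_interval(successes: int, trials: int, z: float = 1.96):
    """Adjusted-Wald (Agresti-Coull) confidence interval for a rate.

    Adds z^2/2 successes and z^2/2 failures before applying the usual
    Wald formula, which behaves much better at small sample sizes.
    Returns (lower, upper), clipped to [0, 1].
    """
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials and trials > 0")
    n_adj = trials + z ** 2
    p_adj = (successes + z ** 2 / 2) / n_adj
    margin = z * math.sqrt(p_adj * (1 - p_adj) / n_adj)
    return max(0.0, p_adj - margin), min(1.0, p_adj + margin)


if __name__ == "__main__":
    for label, done in (("Proposed layout", 8), ("Alternative", 4)):
        low, high = adjusted_wald_interval(done, 10)
        print(f"{label}: {done}/10 completed, 95% CI {low:.0%} to {high:.0%}")
```

At ten participants per layout the intervals are wide and overlap, which is worth knowing before a skeptical stakeholder points it out; a follow-up round with more participants narrows them.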

How to speak each stakeholder's language

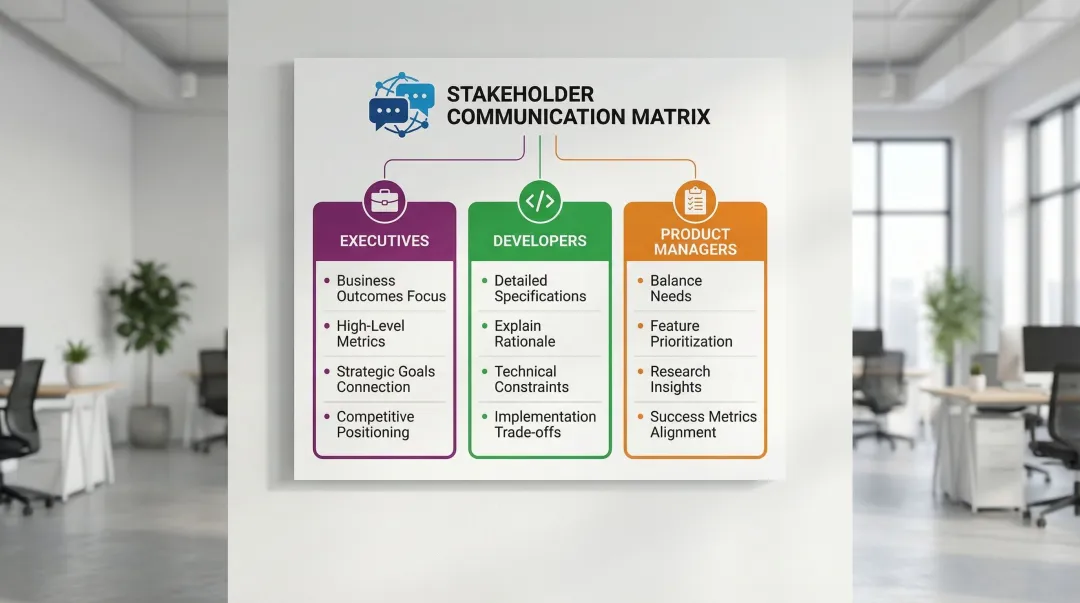

Experts tailor communication to different stakeholders, because what an executive needs to hear is genuinely different from what a developer or product manager needs.

Executives need to hear about business outcomes and ROI — revenue, retention, market share — connected to strategic goals and competitive positioning. Developers need detailed specifications, the reasoning behind design decisions, and room to work through technical constraints and trade-offs together. Product managers sit in between: they need user research findings, feature prioritization rationale, and clear alignment on what success looks like before anything ships.

A deep-tech founder who pitched an onboarding redesign to a CFO as "improved user experience" got it deferred. The same proposal, reframed around a projected reduction in implementation support costs and faster time-to-first-value, was approved in the same meeting. The design work was identical — only the language changed.

Turning criticism into better solutions

Experts handle design criticism by separating ego from work and using objections as an opportunity to refine solutions rather than defend them. When someone says "I don't like this design," experts probe deeper:

- "What specifically concerns you about this approach?"

- "What user need or business goal do you think this doesn't address?"

- "Can you help me understand your perspective?"

This approach transforms subjective criticism into actionable feedback. The real concern usually isn't about the design itself but about an unstated assumption, a misunderstood requirement, or a valid consideration the designer missed. By treating objections as information rather than attacks, experts improve their solutions while building collaborative relationships with the teams they work alongside. The same dynamic plays out in enterprise evaluations: when a buyer pushes back on your UX approach during a pilot, how your team responds to that objection is part of what they're assessing.

Continuous learning: how experts stay sharp

Learning as ongoing practice

Expert UX designers treat learning as an ongoing practice, not a destination. They stay current with evolving tools, methodologies, and industry trends because the field moves quickly. New research methods emerge, tools evolve, user expectations shift, and technologies create new design possibilities. Experts who stop learning fall behind quickly. When you're competing for an enterprise contract against a more established vendor, the product quality gap that comes from outdated methods and stale assumptions is something experienced buyers notice, even when they can't always articulate why.

Continuous learning shows up in daily habits: following industry thought leaders, experimenting with new tools, attending conferences and workshops, and staying embedded in design communities through publications, research papers, and the kinds of conversations that happen at the edge of the field rather than in its center.

Learning from adjacent fields

Experts draw from adjacent fields (psychology, business strategy, data science, accessibility) to bring fresh perspectives to UX challenges. Traditional design disciplines rarely hold all the answers. The most useful insights often come from related domains: psychology surfaces cognitive biases and behavioral patterns that explain why users make the choices they do; business strategy reveals competitive dynamics and business model implications that shape what should be built; data science contributes skills in interpreting analytics and designing experiments; and accessibility research pushes toward inclusive design practices that work across a wider range of abilities.

Cross-disciplinary learning is especially valuable in climate tech, where the domain itself is evolving fast. A UX practitioner who understands how utilities make procurement decisions, or how carbon accounting standards are structured, will ask better research questions and design more usable products than one who treats each project as a generic software problem. That domain fluency shows up directly in how buyers experience your product and your team: when your design decisions reflect an understanding of how procurement works or how regulatory change affects your buyer's operations, you build the kind of credibility that shortens evaluation cycles.

Building learning networks

Experts build learning networks through mentorship, design communities, conferences, and following thought leaders who push the industry forward. Senior practitioners often reach specialist level with 7 or more years of experience and advance by mentoring others and contributing to the community.

Key learning resources include:

- Conferences: CHI (ACM Conference on Human Factors in Computing Systems), Nielsen Norman Group events, UXPA chapters

- Organizations: Nielsen Norman Group, Interaction Design Foundation, local UX meetups

- Communities: Design Declares for sustainability-focused practitioners, specialized Slack groups, LinkedIn communities focused on climate and energy software

- Publications: Industry blogs, research journals, case study platforms, and domain-specific resources like EPRI publications for energy software practitioners or ACEEE reports for efficiency-focused products

For UX practitioners working in climate tech specifically, building fluency in the domain itself (understanding how utilities procure software, how carbon accounting standards work, how grid interconnection timelines affect product roadmaps) is as valuable as keeping up with design methodology. These networks provide exposure to diverse perspectives, early signals of emerging trends, and opportunities to test ideas with peers facing similar challenges. For founders selling into enterprise markets, that accumulated knowledge translates directly into product and conversation quality — the kind of credibility that's hard to manufacture and that experienced buyers consistently reward.

The designers who create the most impact in complex technical domains are not necessarily the ones with the most years of experience. They're the ones who ask better questions, synthesize what they learn into decisions, and make the case for those decisions in terms the whole organization can act on. That's a learnable set of practices, and the ones outlined here are where most of the gap between good and expert actually lives.

If you're building products in climate tech, energy software, or deep-tech and want a clearer read on where your UX is working and where it's creating friction, connect with us.

Frequently asked questions

What's the difference between a good UX designer and a true UX expert?

Experts combine technical skills with strategic thinking and business acumen while maintaining user focus. They shape product strategy, influence business decisions, and mentor other designers, demonstrating mastery across research, design, and communication rather than just execution.

What are the most important skills for UX experts to master?

Problem-solving ranks as the top skill hiring managers prioritize, followed by UI/visual design and collaboration. Core competencies include research methods, strategic thinking, communication, empathy, and understanding business metrics. All of these matter more than technical tool proficiency.

How do UX experts balance user needs with business goals when they conflict?

Experts reframe conflicts as design challenges rather than trade-offs. Instead of choosing sides, they ask "how might we achieve the business goal while serving user needs?" and use data to make the case for solutions that satisfy both constraints.

What tools and resources do UX experts use to stay current?

Design communities (UXPA chapters), industry publications (Nielsen Norman Group, Interaction Design Foundation), conferences (CHI), and thought leaders on LinkedIn. Experts also engage with adjacent fields (business publications, psychology research, and technology trends) to bring diverse perspectives to their work.