You're building a product that no one has built quite like this before. Whether it's a carbon accounting platform, a grid analytics tool, or an energy management system, the users you're designing for exist inside slow-moving industries with complex workflows, multi-user environments, and deep skepticism of early-stage software.

That context changes how you approach UX research. Generic methods and generic references won't get you far when your target user is a utility procurement manager or a sustainability compliance lead who has never seen a product quite like yours.

This is where the literature review becomes more than a box-ticking exercise. Done well, it gives you the research foundation to make faster, better-informed design decisions without spending months in primary research. This guide covers when to use it, exactly how to run it, and the mistakes that quietly derail the process.

TLDR

- Synthesize existing research (academic papers, industry reports, internal studies) to inform design decisions before you run a single interview

- Use during discovery, before redesigns, or when entering a problem space where your team lacks direct experience

- Define research questions, identify sources, organize thematically, then synthesize into concrete recommendations

- Set time limits (5 hours or less for focused reviews) and stop when sources start repeating findings

- Common pitfalls: searching too broadly, summarizing instead of synthesizing, ignoring internal research, failing to connect findings to actual decisions

What is a literature review in UX research?

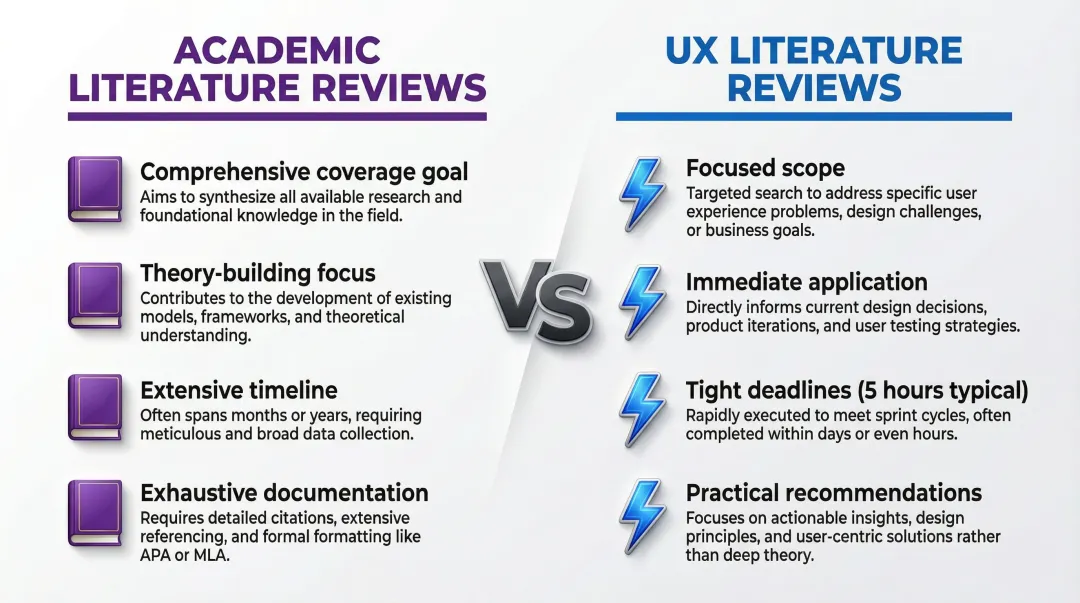

A UX literature review is a systematic examination of existing research, case studies, design patterns, and documented user insights related to a specific design challenge. It's structured, time-bound, and built for immediate application rather than theoretical completeness.

Academic reviews aim for comprehensive coverage to build theory. UX literature reviews are focused and practical, shaped by product timelines and real decisions that need to be made. For climate and deep-tech products, this distinction matters more than usual. The research landscape is thinner, the user populations are specialized, and design patterns from consumer software often don't transfer. A literature review helps you identify what applies, what doesn't, and where you'll need to generate primary data yourself.

Types of UX literature reviews

Two types dominate UX work. Internal research covers past user studies, usability tests, analytics reports, and design documentation within your organization. External research covers academic papers, industry reports, competitor analyses, design pattern libraries, and published case studies.

Both types of research form what Nielsen Norman Group calls "foundational parts of any emerging research project." Starting with secondary research minimizes costs and builds the evidence base you need before investing in primary fieldwork.

The goal is practical application that informs the specific design decisions in front of you.

When should you conduct a literature review in UX?

Literature reviews aren't necessary for every project. They deliver the most value in specific scenarios rather than as a default practice.

Ideal scenarios for literature reviews

Literature reviews prove most valuable in three situations:

- Exploring unfamiliar territory. If you're designing for a user segment your team hasn't worked with before (utility operators, compliance managers, industrial maintenance leads), a focused review gives you foundational context before you run a single interview. These reviews can often be completed in 5 hours or less.

- Planning major redesigns or feature launches. Understanding what patterns have been tested in adjacent industries and where they failed saves you from expensive assumptions, especially when your product operates in a regulated or technically constrained environment.

- Justifying design decisions to stakeholders. External research provides objective grounding that holds more weight than intuition, particularly when you're presenting to a technical leadership team or enterprise buyer who expects evidence.

When to skip or minimize literature reviews

Skip or minimize the literature review in two situations. For minor UI updates, A/B test variations, or iterative improvements, primary user research delivers more value, and time spent reviewing literature is better invested in direct testing. If you're working against a tight timeline and your team already has fresh, relevant insights from recent primary research, an additional literature review will likely offer diminishing returns.

What you need before starting a UX literature review

Before you start pulling sources, three things need to be in place. Without them, you end up with a folder full of papers that don't connect to anything you're actually deciding.

Clear research questions

Define 2-4 specific questions your literature review must answer. "What accessibility patterns work best for voice-based interfaces?" is focused and actionable. "What is good UX?" is too vague to guide meaningful research.

Ensure your questions align with actual project decisions and can realistically influence what gets built. Test question quality by asking: can you imagine finding concrete answers? Are they specific enough to guide keyword searches but broad enough to capture relevant insights?

Once your questions are clear, assess what resources you'll need to answer them.

Access to research sources

Audit what you can access before starting. Check internal research repositories and company documentation first, then look at what database subscriptions your company holds, such as ACM Digital Library or IEEE. Free resources like Google Scholar, Nielsen Norman Group articles, and Medium publications often cover significant ground. Factor in budget constraints before committing time to sources that require paid access.

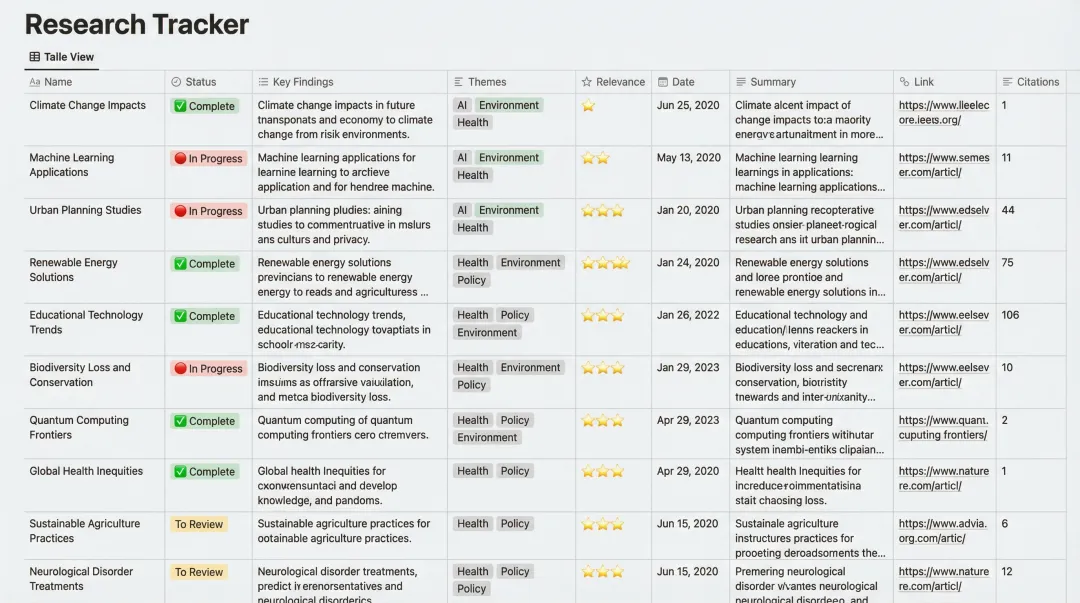

Organization system

Set up a method for tracking sources before you start. A reference manager like Zotero or a flexible database like Notion or Airtable works well, as does a simple spreadsheet with columns for source, key findings, relevance score, and design implications. Tagging by theme or relevance makes it easier to group sources later. Having this structure in place before you start reading prevents information overload and makes the synthesis step significantly faster.

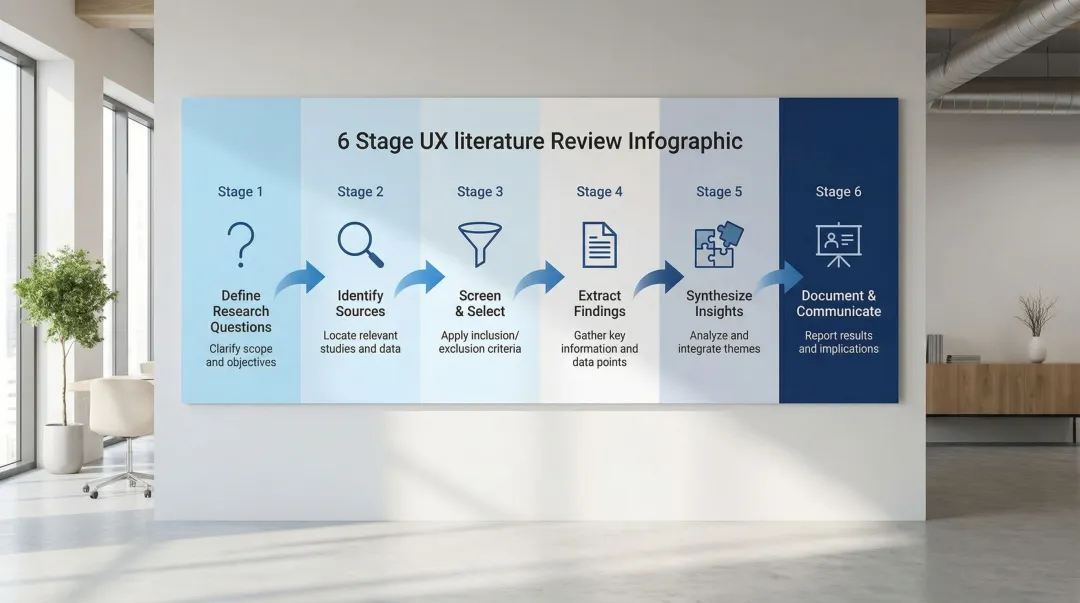

How to conduct a literature review for UX research

Step 1: Define focused research questions

Start by understanding the design problem or decision that needs research support, then translate it into 2-4 specific, answerable questions that will guide your search.

Example transformation:

- No: "How do users navigate complex dashboards?" (too vague)

- Yes: "What usability patterns support data-heavy dashboards for non-technical users in energy management software?" (focused, actionable)

The more specific your question, the more useful your sources will be. If your product sits at the intersection of two industries (say, carbon accounting and enterprise procurement), you may need separate questions for each user context.

Step 2: Identify and access relevant sources

Start with internal sources. Search your company's research repository, past user studies, usability test reports, and design documentation for relevant findings. This surfaces institutional knowledge and prevents repeating work that's already been done.

External sources to explore:

- Academic databases: Google Scholar, ACM Digital Library, and ResearchGate for HCI studies (check peer-review status, since Google Scholar and ResearchGate also surface preprints)

- UX industry publications: Nielsen Norman Group, Baymard Institute, Smashing Magazine for applied research

- Design pattern libraries: Material Design, Apple Human Interface Guidelines, and domain-specific repositories for documented solutions

- Industry-specific sources: For climate and deep-tech products, look beyond traditional UX publications. Energy industry journals, EPRI research, utility operator training documentation, and sustainability reporting standards (GRI, TCFD) can surface real user mental models and workflow constraints that standard UX studies haven't captured.

Search strategically using specific keywords from your research questions plus terms like "usability study," "user research," "design patterns," "case study," "UX evaluation."
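One way to make this search strategy repeatable is to generate the query strings up front. The sketch below pairs each topic phrase with each of the method terms listed above. The topic phrases and the `build_queries` helper are hypothetical, so swap in phrases from your own research questions.

```python
from itertools import product


def build_queries(topic_terms, method_terms):
    """Pair every topic phrase with every method term as a quoted search string."""
    return [
        f'"{topic}" "{method}"'
        for topic, method in product(topic_terms, method_terms)
    ]


# Hypothetical topic phrases pulled from a research question
topic_terms = ["energy management dashboard", "data-heavy dashboard"]

# Method terms taken from the paragraph above
method_terms = [
    "usability study",
    "user research",
    "design patterns",
    "case study",
    "UX evaluation",
]

queries = build_queries(topic_terms, method_terms)
for query in queries:
    print(query)
```

Pasting each line into Google Scholar or a database search box gives you a consistent record of what you searched, which also feeds the methodology section of your final deliverable.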

Evaluate source credibility:

- Peer-reviewed papers: High credibility for methodology, though check whether the study population resembles your actual users

- Established UX firms and consultancies (Nielsen Norman Group, Baymard Institute): Strong practical credibility, particularly for well-documented interaction patterns

- Blog posts and Medium articles: Treat as directional, not definitive; verify any specific claims with primary or peer-reviewed sources

- Internal research: Highest contextual relevance, but confirm the methodology is sound and the findings haven't been superseded by product changes

Step 3: Screen and select sources efficiently

Don't read everything fully at first. Use abstracts, executive summaries, and introductions to determine relevance before committing time to a full read.

Apply inclusion criteria:

- Does this source address your research questions?

- Is the research methodology sound?

- Is it recent enough to be relevant? For UX patterns, generally aim for research within the past 5-10 years. For climate and energy-adjacent products, be especially careful with recency: user expectations and technical capabilities in this space have shifted significantly, and a study on utility software usability from 2012 may not reflect how operators work today.

- Does it apply to your user context? Research on consumer-facing interfaces may not transfer to enterprise tools used by technical specialists.

Create a quick relevance scoring system (High/Medium/Low) to prioritize reading order. Aim for 15-25 high-quality sources rather than 100 superficial ones; depth matters more than quantity in UX literature reviews.
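As a rough sketch, the mechanical part of this screening step could look like the Python below. The `Source` fields, the sample titles, and the 10-year cutoff are illustrative assumptions. Judging whether a methodology is sound or whether a study matches your user context still takes a human read.

```python
from dataclasses import dataclass

# Lower number = read sooner
RELEVANCE_ORDER = {"High": 0, "Medium": 1, "Low": 2}


@dataclass
class Source:
    title: str
    year: int
    relevance: str  # "High", "Medium", or "Low"
    addresses_question: bool


def reading_queue(sources, current_year, max_age_years=10):
    """Drop sources that fail the basic criteria, then order High to Low, newest first."""
    kept = [
        s for s in sources
        if s.addresses_question and current_year - s.year <= max_age_years
    ]
    return sorted(kept, key=lambda s: (RELEVANCE_ORDER[s.relevance], -s.year))


# Hypothetical sources for illustration only
sources = [
    Source("Utility control room usability", 2012, "High", True),
    Source("Enterprise dashboard study", 2021, "Medium", True),
    Source("Operator alarm handling", 2019, "High", True),
    Source("E-commerce checkout patterns", 2022, "Low", False),
]

queue = reading_queue(sources, current_year=2024)
for s in queue:
    print(s.relevance, s.year, s.title)
```

In this example the 2012 study falls outside the recency window and the e-commerce source doesn't address the research question, so only two sources reach the reading queue.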

Step 4: Extract and organize key findings

As you read each source, take structured notes capturing:

- Research question addressed

- Methodology used

- Key findings relevant to your project

- Limitations or context that might affect applicability

- Potential design implications

Organize findings thematically (by user need, design pattern, problem type) rather than source-by-source to enable synthesis. Use spreadsheets with theme columns, affinity diagrams (digital or physical), or mind maps to show relationships between findings.

Flag contradictory findings, because they often reveal important nuances or context-dependent factors worth investigating. In climate tech products specifically, you'll frequently encounter research that holds true for consumer software but breaks down in enterprise or industrial contexts. Those contradictions are data, not noise.
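If your notes live in a spreadsheet export, a short script can do the regrouping and flag themes where sources disagree. This is a minimal sketch: the note format, the sample findings, and the "supports"/"contradicts" shorthand are assumptions made for illustration.

```python
from collections import defaultdict

# Hypothetical notes: (source, theme, finding, direction)
# "direction" is an assumed shorthand for whether the finding
# supports or contradicts the pattern named by the theme.
notes = [
    ("Study A", "progressive disclosure", "Reduced errors for novice users", "supports"),
    ("Study B", "progressive disclosure", "Slowed expert operators", "contradicts"),
    ("Report C", "alert fatigue", "Operators ignored low-priority alerts", "supports"),
    ("Study D", "alert fatigue", "Grouped alerts improved response", "supports"),
]


def group_by_theme(notes):
    """Reorganize source-by-source notes into a theme -> findings mapping."""
    themes = defaultdict(list)
    for source, theme, finding, direction in notes:
        themes[theme].append(
            {"source": source, "finding": finding, "direction": direction}
        )
    return dict(themes)


def contested_themes(themes):
    """Return themes whose findings point in more than one direction."""
    return sorted(
        theme
        for theme, items in themes.items()
        if len({item["direction"] for item in items}) > 1
    )


themes = group_by_theme(notes)
flagged = contested_themes(themes)
print(flagged)
```

A flagged theme is a prompt to reread the underlying studies and ask what differs between them (user role, industry, task), not a signal to discard either finding.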

Step 5: Synthesize insights across sources

Move beyond summarizing individual studies to identifying patterns, themes, and relationships across multiple sources. Synthesis is the step most teams skip, and it's where the actual design value lives.

Look for convergent evidence. Findings that appear across multiple studies strengthen confidence in applying them to your design. Research saturation principles apply here: stop when new sources stop yielding new themes.
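The saturation rule can be made concrete with a simple check over your reading log. The sketch below assumes you record the themes each source touches, in reading order. The three-source window is an arbitrary choice for illustration, not an established threshold.

```python
def reached_saturation(themes_per_source, window=3):
    """Return True if the last `window` sources introduced no new themes.

    `themes_per_source` is a list of theme lists, in the order sources were read.
    """
    if len(themes_per_source) <= window:
        return False  # not enough earlier sources to compare against

    seen = set()
    for themes in themes_per_source[:-window]:
        seen.update(themes)

    # Any theme in the recent window that was not seen earlier means
    # the review is still turning up new material.
    return all(set(themes) <= seen for themes in themes_per_source[-window:])


# Hypothetical reading log
reading_log = [
    ["cognitive load", "role-based views"],
    ["alert fatigue"],
    ["cognitive load"],
    ["role-based views", "alert fatigue"],
    ["cognitive load"],
]

print(reached_saturation(reading_log))      # last three sources add nothing new
print(reached_saturation(reading_log[:4]))  # "alert fatigue" is still new here
```

Treat the result as a prompt to pause and assess rather than a hard stop: if your research questions are still unanswered, saturation may mean you need different sources or primary research.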

Identify gaps. What questions remain unanswered? What contexts haven't been studied? These gaps may justify primary research, which is particularly common in climate tech where the user populations are niche and understudied.

Create synthesis statements that combine insights across sources. For example: "Three studies of data-heavy enterprise dashboards found that progressive disclosure of technical details reduced cognitive load for non-expert users, though the optimal level of detail varied significantly depending on whether users had an operational or analytical role."

Your synthesis statements should map to your actual product context. Generic synthesis drawn from e-commerce research doesn't build design confidence when your product is a carbon emissions monitoring platform.

Connect findings to your specific design context: how do these insights apply to your product, your users, and your constraints?

Step 6: Document and communicate findings

Create a deliverable appropriate to your audience. Research teams typically need a detailed report; stakeholders want an executive summary; designers benefit from an annotated design pattern library; and product managers usually work best with a focused research brief.

Structure your documentation:

- Research questions

- Methodology (search strategy, sources included)

- Key themes with supporting evidence

- Design implications and recommendations

- Gaps requiring primary research

Include enough source citations that others can verify findings or go deeper, but prioritize readability over academic citation style. When presenting to stakeholders, connect findings directly to design decisions: "Based on five studies of form design for non-technical enterprise users, we recommend..." rather than the vague "research shows..."

Common mistakes when conducting UX literature reviews

Avoid these pitfalls that undermine literature review effectiveness:

Starting with poor scope definition:

Searching without clear research questions leads to information overload and wasted time. Always define focused questions before you open a browser, and start narrow before expanding. For climate tech products, this is especially important because adjacent research from utilities, industrial operations, and sustainability software can pull you in many directions that aren't relevant to your specific user.

Mishandling source material:

Summarizing instead of synthesizing creates lists rather than insights. Look for patterns, contradictions, and relationships across sources rather than just cataloguing what each paper says. Accepting findings uncritically without evaluating methodology, sample size, or context leads to misapplied insights, and ignoring internal research wastes past investment while risking a repeat of previous mistakes.

Failing to make findings actionable:

Every insight should point to a concrete design direction. If your literature review surfaces research on how non-technical users interpret data visualizations, that finding should translate directly into decisions about your dashboard hierarchy, not just sit in a findings document.

Nielsen Norman Group's guidance on actionable usability findings consistently points to this gap: research that doesn't connect to decisions gets ignored, which is a costly outcome when your primary research cycles are long and your next user access window may be months away.

Perfectionism over pragmatism:

Set time limits based on what the decision actually requires. Prioritize depth in areas directly relevant to your research questions, rather than trying to achieve exhaustive coverage. Research saturation, not completeness, is the target.

Tools and resources for UX literature reviews

Research databases and sources

Academic:

- Google Scholar — Free, broad coverage across disciplines

- ACM Digital Library — HCI and computing research (subscription required)

- ResearchGate — Researcher profiles and papers, some free access

UX industry:

- Nielsen Norman Group — Articles and reports on UX best practices

- Baymard Institute — E-commerce UX research based on extensive testing

- UX Collective and Smashing Magazine — Curated articles and case studies

Design patterns:

- Material Design Guidelines

- Apple Human Interface Guidelines

- Pattern libraries like UI Patterns and Mobile Patterns

Industry-specific (for climate and deep-tech products):

- EPRI (Electric Power Research Institute) — Research on utility and energy systems

- GRI and TCFD documentation — Sustainability reporting standards that reveal how enterprise users think about data

- Industry operator training materials — Often the closest proxy for real user mental models in regulated industries

Organization and reference management

Zotero: Free reference manager with browser extension for saving sources, automatic citation formatting, and PDF organization.

Notion or Airtable: Flexible databases for organizing findings with tags, links, and custom fields that enable filtering and cross-referencing.

Miro or FigJam: Visual organization with affinity diagramming and mind mapping capabilities for collaborative synthesis.

Simple spreadsheets work well for smaller reviews. Use columns for: Source, Key finding, Theme, Relevance, Design implication.
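If you'd rather start from a file than a blank sheet, the snippet below sketches that column layout as a CSV using Python's standard `csv` module. The two example rows are invented placeholders, not real sources.

```python
import csv
import io

# Column names taken from the list above
COLUMNS = ["Source", "Key finding", "Theme", "Relevance", "Design implication"]

# Hypothetical entries for illustration only
rows = [
    {
        "Source": "Internal usability test, Q2",
        "Key finding": "Operators skipped the summary view",
        "Theme": "Dashboard hierarchy",
        "Relevance": "High",
        "Design implication": "Lead with the operational view",
    },
    {
        "Source": "Published enterprise dashboard case study",
        "Key finding": "Analysts wanted raw data export",
        "Theme": "Data access",
        "Relevance": "Medium",
        "Design implication": "Add export to the detail screen",
    },
]


def to_csv(rows):
    """Serialize tracker rows to CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = to_csv(rows)
print(csv_text)
```

To save the tracker to disk instead of printing it, pass a file opened with `open("review.csv", "w", newline="")` to `csv.DictWriter`. The resulting file opens directly in Excel or Google Sheets.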

Once you've chosen your organization approach, don't overlook valuable research already conducted within your organization.

At What if Design, our UX research process for climate and deep-tech products starts with exactly this step. Before running any interviews, we map what's already known, what's been tested, and where the genuine gaps are. It shortens the path to confident design decisions and keeps primary research focused on questions that can't be answered any other way.

Finding internal research

Start your internal search by querying research repositories like Dovetail, Confluence, or SharePoint using keywords, tags, or project names. Talk to research operations teams or long-tenured researchers who know what studies exist. Finally, review past project documentation, design specs, and product requirement documents, which often contain embedded research references that don't surface in formal searches.

A well-run literature review changes how you enter a problem

Instead of building on assumptions and hoping they hold, you build on what's already been tested and documented. For teams working on complex climate and deep-tech products, where primary research access is limited and sales cycles are long, that foundation makes a meaningful difference. Your primary research becomes more targeted, your design decisions carry more weight with stakeholders, and your team spends less time relitigating questions that have already been answered elsewhere.

If you're working on a complex product in climate tech, energy software, or deep-tech and want a research process that accounts for the specific constraints of your industry, connect with us.

Frequently asked questions

How long should a UX literature review take?

Focused reviews addressing specific design questions can take as little as 5 hours, while more comprehensive reviews of entirely new problem spaces may require 1-4 weeks of work. Adjust based on your project timelines, the maturity of the research landscape in your domain, and how much is already known internally.

Do I need access to academic journals to conduct a good literature review?

Not necessarily. Many valuable UX insights come from industry publications, design blogs, and internal research. Academic access helps, particularly for HCI-specific research, but it isn't required for actionable findings in most UX contexts. For climate and deep-tech products, industry-specific sources often provide more relevant context than academic papers anyway.

How do I know when I've reviewed enough sources?

Stop when you reach saturation: new sources are repeating findings you've already captured, your research questions are answered with converging evidence, or you've identified clear gaps that only primary research can fill.

Should I include competitor research in my literature review?

Yes, if relevant. Analyzing competitor solutions and published case studies provides practical examples of what's been tried and what the market has validated. Treat them critically, since you won't know their full research methodology or how they measure success.

How do I present literature review findings to stakeholders who want "just the highlights"?

Create a one-page executive summary with key themes, design recommendations, and evidence strength. Keep detailed findings in an appendix for those who want to go deeper. For technical stakeholders at climate or deep-tech companies, grounding recommendations in evidence is often more persuasive than visual presentation alone.

What if my literature review contradicts what stakeholders want to do?

Present the contradictory evidence objectively, explain the context and limitations of the research, and acknowledge that your specific situation may differ. Recommend small-scale primary research to validate before full commitment. This is a common situation in deep-tech products where the technology context doesn't map neatly to existing research.