UX research as innovation catalyst in R&D

Your R&D team has built something technically impressive. The chemistry works. The system performs in controlled conditions. But the utility operators who would use it daily find the interface confusing, and the enterprise buyers evaluating it can't map the value to their procurement language. The technology is sound. The commercial path is unclear.

This is the gap UX research is designed to close — not as a late-stage polish exercise, but as an early-stage validation tool that determines whether an innovation reaches market at all.

For climate tech and deep tech companies moving from scientific proof to commercial product, this gap is especially costly. In aerospace engineering, design changes made after the concept phase cost 13 times more than changes made during early discovery. Across broader product development, the multiplier reaches 100 times once a product has been released.

This post breaks down how R&D-stage UX research works, which methods apply at which stages, and how climate tech and deep tech teams can build this capability without slowing down.

TLDR:

- R&D UX research validates assumptions early, reducing innovation risk before costly development begins

- Late-stage design changes cost 13-100x more than early-phase corrections

- Ethnography drives discovery; rapid prototype testing drives validation

- Lean methods and agency partnerships make research accessible to small teams

- Organizations see 415% returns with payback periods under six months

Understanding UX research in the R&D context

What makes R&D UX research different

R&D UX research differs from traditional product research in a fundamental way: while product teams optimize what already exists, R&D researchers are working to validate whether a concept should be built at all. They need to confirm that a breakthrough idea solves a real problem before significant engineering resources are committed to building it.

This research has to answer two questions simultaneously: does the technology work, and will the people who need to use it actually be able to use it effectively? A battery management system might perform flawlessly in laboratory conditions. But if utility operators find the interface confusing or enterprise buyers can't understand the value proposition, the innovation fails commercially regardless of its technical merit.

R&D UX research applications include emerging technologies like hydrogen production systems or carbon marketplaces, proofs of concept testing novel approaches to energy storage or industrial processes, innovation sprints exploring future scenarios for climate solutions, and blue-sky projects investigating unmet needs in sustainability sectors.

The R&D UX research spectrum

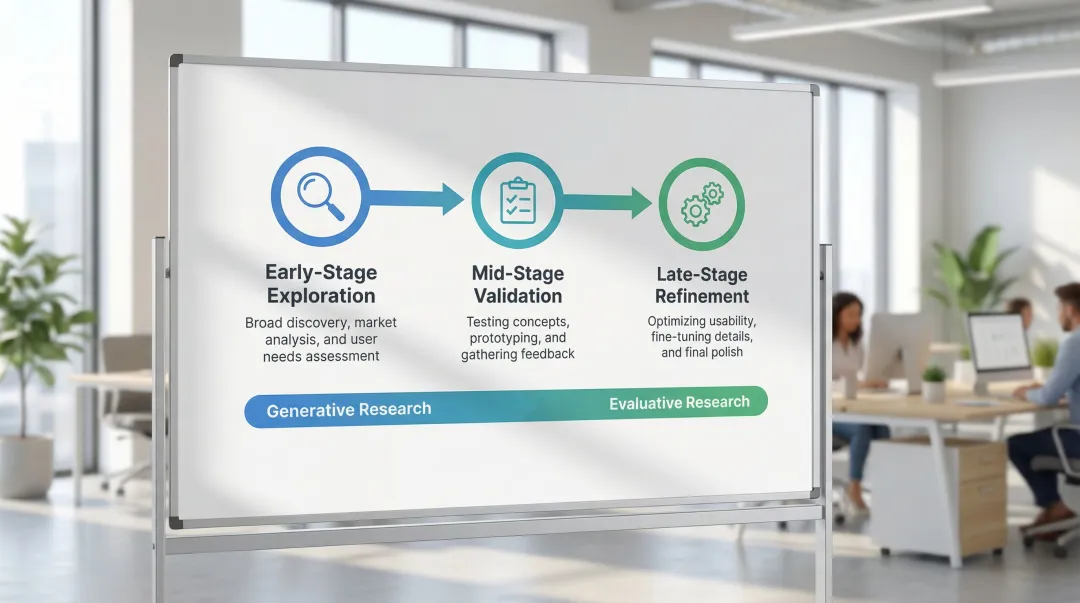

Research in R&D exists on a spectrum from generative to evaluative, each serving distinct purposes throughout the innovation lifecycle.

Generative research uncovers problems and opportunities. Early in R&D, teams need to understand: what challenges do users face? What workflows exist today? What unmet needs could fuel innovation? Ethnography, contextual inquiry, and diary studies reveal insights that define the problem space.

Evaluative research tests solutions and prototypes as concepts mature. Teams validate whether solutions work, whether users understand them, and whether adoption is likely. Usability testing, concept validation, and Wizard of Oz testing assess whether innovations deliver on their promise.

Research focus shifts throughout the R&D lifecycle. Early-stage exploration focuses on broad discovery of user needs, pain points, and opportunity areas. Mid-stage validation tests specific concepts and prototypes with target users. Late-stage refinement optimizes usability and prepares for market launch.

Each stage requires balancing rigor with speed — rapid iteration is what determines R&D success in practice. The "5-user rule" demonstrates this balance: testing with just 5 users uncovers approximately 85% of usability problems, enabling quick, budget-friendly iteration cycles.
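
The 85% figure follows from a simple probability model that is widely cited alongside the 5-user rule: if each participant encounters a given problem with probability L, then n participants together surface a share of 1 - (1 - L)^n of the problems. The sketch below assumes L = 0.31, the average commonly quoted for this model. The function name and default value are illustrative, and the real detection rate varies by product and task.

```python
def share_of_problems_found(n_users: int, detect_rate: float = 0.31) -> float:
    """Expected share of usability problems seen at least once by n_users.

    detect_rate is the chance that a single participant hits a given problem.
    The 0.31 default is an assumed average, not a measured value for any
    specific product.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= detect_rate <= 1.0:
        raise ValueError("detect_rate must be between 0 and 1")
    return 1.0 - (1.0 - detect_rate) ** n_users


if __name__ == "__main__":
    # Show how coverage grows as participants are added.
    for n in (1, 3, 5, 10, 15):
        print(f"{n:>2} users: {share_of_problems_found(n):.0%}")
```

With the assumed rate, five users surface roughly 84% of problems, consistent with the approximate figure above, and each additional participant adds less than the one before. That diminishing return is the argument for several small rounds rather than one large one.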

Why UX research is critical for R&D innovation

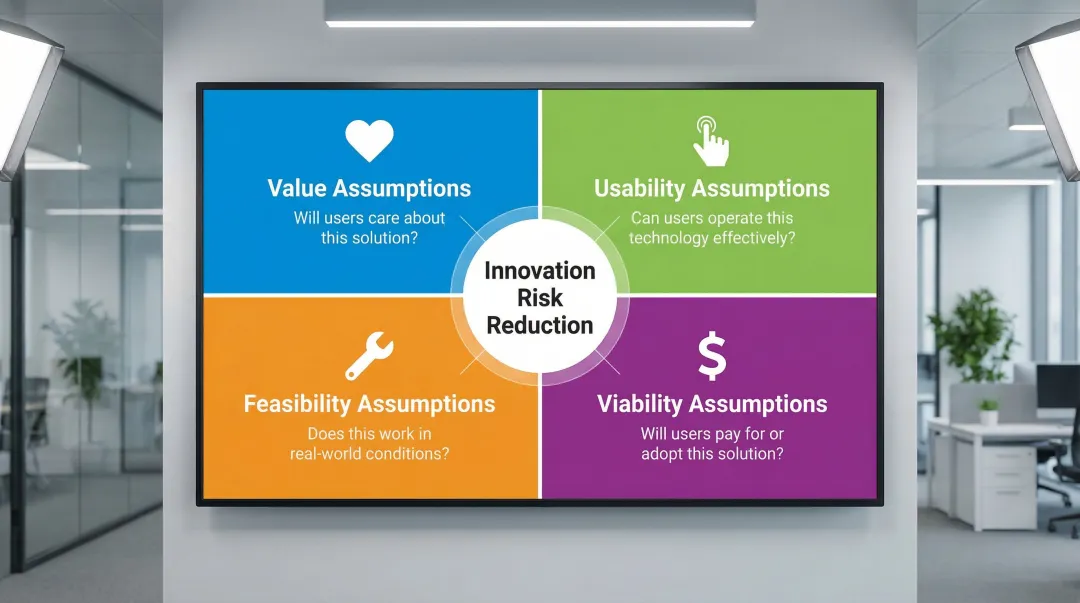

Reducing innovation risk through user insights

UX research reduces innovation risk by testing specific assumptions before they become expensive engineering decisions — things like whether industrial operators can interpret your data visualization, or whether a procurement team can articulate your ROI within their internal approval process.

The financial impact is substantial: aerospace sector data shows that making design changes after concept freeze costs 13 times more than early-stage modifications.

Framework for identifying critical assumptions to test:

- Value assumptions: Will users care about this solution?

- Usability assumptions: Can users operate this technology effectively?

- Feasibility assumptions: Does this work in real-world conditions?

- Viability assumptions: Will users pay for or adopt this solution?

When applying this framework, prioritize assumptions with the highest risk and greatest impact on project success. For a carbon capture platform, validating that industrial buyers understand ROI calculations matters more than perfecting dashboard aesthetics.
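
One way to apply this prioritization is to score each assumption for risk and impact, then test the highest-scoring ones first. The following is a minimal sketch, not a standard method: the 1 to 5 scales, the multiplication, and every score shown are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assumption:
    category: str   # "value", "usability", "feasibility", or "viability"
    statement: str
    risk: int       # 1-5: how likely the assumption is wrong (hypothetical scale)
    impact: int     # 1-5: how much the project suffers if it is wrong (hypothetical scale)

    @property
    def priority(self) -> int:
        # Risk times impact is an illustrative scoring choice.
        return self.risk * self.impact


def rank_assumptions(assumptions: list[Assumption]) -> list[Assumption]:
    """Return assumptions ordered from most to least urgent to test."""
    return sorted(assumptions, key=lambda a: a.priority, reverse=True)


# Hypothetical backlog for a carbon capture platform.
backlog = [
    Assumption("usability", "Operators can read the dashboard without training", 2, 3),
    Assumption("viability", "Industrial buyers understand the ROI calculation", 4, 5),
    Assumption("value", "Plant managers care about hourly capture data", 3, 4),
]

for a in rank_assumptions(backlog):
    print(f"{a.priority:>2}  {a.category:<10} {a.statement}")
```

Under these made-up scores the ROI assumption ranks first and dashboard readability last, which mirrors the carbon capture example above. The value of the exercise lies in forcing the team to agree on scores, not in the exact numbers.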

Accelerating time-to-market

UX research speeds up the overall development cycle by preventing the kind of expensive pivots that happen when teams build in the wrong direction.

Teams that skip research often invest months of engineering effort into features users don't actually want or can't use effectively. By the time this becomes clear, the cost of reversing course is significant. Research-driven teams identify the right problems earlier, which means engineering time goes toward solutions that hold up when tested with real users.

The concept of "failing fast" through rapid user testing transforms how R&D operates. Testing rough prototypes with 5 users in a week reveals fundamental flaws before months of engineering effort are invested.

Running three small studies with 5 users each — rather than one large 15-user study — lets teams fix problems and re-test at each stage, maximizing learning per week rather than per quarter.

How research uncovers user-centered innovation

UX research surfaces the specific friction points and workflow gaps that users live with daily — the ones that don't show up in product briefs because users have normalized working around them.

Users often can't articulate what they need, especially for technologies that don't yet exist. Research methods create the conditions to reveal these hidden patterns.

Methods for identifying innovation opportunities:

- Observe users struggling with current workflows to spot inefficiencies

- Conduct contextual inquiry to understand reasoning and mental models

- Use diary studies to capture longitudinal patterns in complex environments

- Interview stakeholders across the adoption chain from regulators to end customers

Climate tech companies using this approach have seen meaningful results. Through usability testing with utility partners and enterprise buyers, teams uncover specific pain points in energy management platforms — like confusing data visualizations or unclear ROI metrics — that become the foundation for genuinely differentiated solutions rather than incremental improvements.

Building internal stakeholder confidence

Beyond revealing user insights, research evidence builds internal buy-in for innovative concepts. When R&D teams present user insights alongside technical specifications, stakeholders see both feasibility and market demand. This dual validation is crucial for securing funding and resources.

User insights help align cross-functional teams around a shared understanding. When engineers, product managers, and business leaders see the same user struggles, debates move from opinion to evidence, which changes both how decisions get made and how quickly.

The competitive advantage of research-driven innovation

Organizations that integrate UX research into R&D build more differentiated products. User insights lead to solutions addressing real pain points that competitors miss. According to the McKinsey Design Index, top-quartile design performers achieve 32 percentage points higher revenue growth and 56 percentage points higher shareholder returns than industry peers.

Deep user understanding creates this competitive advantage. Companies that research how utility operators actually use energy dashboards, how enterprise buyers evaluate carbon platforms, or how technicians maintain hydrogen systems build products competitors cannot easily replicate — because the insight behind the design isn't visible from the outside.

Essential UX research methods for R&D teams

Generative research methods for innovation discovery

R&D teams working on breakthrough technologies face a specific challenge: understanding user needs for products that don't yet exist. Ethnographic research and contextual inquiry address this by observing users in their actual environments, uncovering complex workflows and unmet needs that standard interviews rarely reveal.

Contextual inquiry uncovers details like reasoning, motivation, and mental models that users omit in standard interviews. For specialized contexts — observing operations on an oil tanker or inside a carbon capture facility — field research provides information that no amount of remote interviewing can replicate.

Diary studies capture longitudinal usage patterns by having participants log activities and interactions over time. This method is particularly useful for understanding how a new technology would fit into daily routines or complex multi-step workflows.

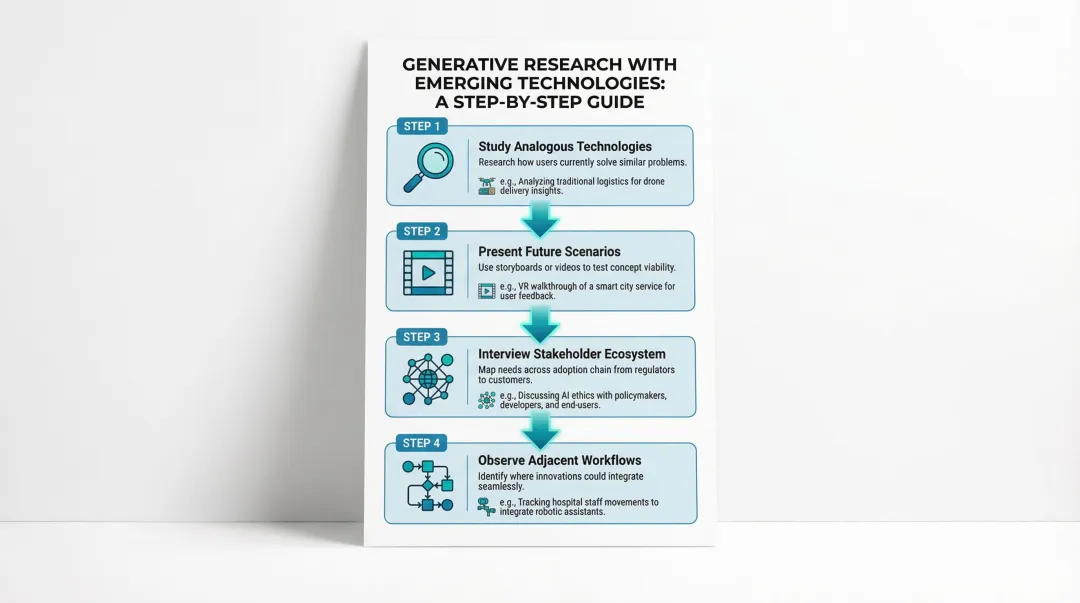

For emerging technologies without direct precedents, R&D teams need adapted approaches:

Conducting generative research with emerging technologies:

- Study analogous technologies to understand how users currently solve similar problems

- Present future scenarios through storyboards or videos to test concept viability

- Interview stakeholders across the adoption chain to map ecosystem needs

- Observe adjacent workflows where innovations could integrate seamlessly

Evaluative research methods for concept validation

Prototype and concept testing validates value propositions and usability before expensive engineering begins. Successful R&D teams share early prototypes with outsiders rather than perfecting them internally, which builds a culture of rapid validation rather than internal consensus-seeking.

Wizard of Oz testing simulates technology functionality manually, allowing teams to test user reactions before building complex systems. For an AI-powered energy optimization platform, researchers manually provide recommendations to test whether users trust and act on the guidance — validating the core value proposition before investing in the underlying algorithms.

R&D contexts require adapted usability approaches: test incomplete prototypes by focusing on core interactions rather than polish, use think-aloud protocols to surface user mental models, run comparative tests between concept variations, and prioritize the critical tasks that determine adoption success.

Rapid research techniques for agile R&D

When speed determines competitive advantage, lean research methods deliver directional insights without sacrificing decision quality.

Guerrilla testing involves quick, informal usability tests with readily available participants. Testing a prototype at an industry conference or even a coffee shop with relevant professionals can surface fundamental usability problems in hours rather than weeks.

Remote unmoderated studies allow participants to complete tasks independently while software records their interactions. This method scales efficiently and works across geographies, which matters when your target users are spread across utilities in different regions.

Rapid surveys gather quantitative data quickly to validate assumptions or prioritize features. Keeping surveys focused — 5-7 questions maximum — maintains response rates and keeps the data actionable.

The 80/20 rule in UX research

Focus on methods that provide maximum insight with minimum time investment. Nielsen Norman Group research shows that 20% of product areas cause 80% of user frustration. Targeting research on these high-friction areas produces the highest return on research time.

Choosing between rapid and rigorous methods depends on the stakes involved. Rapid methods produce directional insights and quick validation; rigorous methods support high-stakes decisions affecting significant investment. A hybrid approach uses rapid methods for iteration cycles and rigorous methods for major milestones.

Mixed methods approach

Combining qualitative and quantitative research provides a more complete picture. Qualitative methods reveal why users behave the way they do; quantitative methods show how many users experience the same issue, which is what stakeholders need in order to prioritize fixes.

Triangulating insights from multiple methods

- Surveys identify common pain points; interviews then uncover root causes

- Usability testing finds problems; analytics then measure their actual impact

- Ethnography enables discovery; prototype testing then validates specific concepts

- Diary studies provide qualitative context; usage metrics then reveal quantitative patterns

Framework for selecting research mix

Project stage determines methodology balance. Early exploration requires more qualitative research; later validation requires quantitative confirmation before committing engineering resources.

Timeline constraints shape method selection. Tight deadlines favor rapid approaches; strategic decisions affecting significant investment justify deeper investigation.

Resource availability guides scope. Limited budgets prioritize high-impact methods; larger budgets enable comprehensive multi-method approaches across the full adoption chain.

Building UX research capabilities in R&D organizations

Structuring UX research in R&D teams

Organizations structure research teams through three primary models, each with distinct advantages.

Centralized research teams have researchers reporting to a central manager, promoting consistency and strategic alignment. Organizations needing standardized methods across multiple R&D initiatives benefit most from this approach.

Embedded researchers are dedicated to specific product teams, where they develop deep domain knowledge and support faster iteration cycles. R&D teams working on long-term, complex innovations see the greatest value here.

Hybrid approaches balance strategic oversight with tactical execution. Researchers report centrally but deploy to product teams, combining consistency with responsiveness.

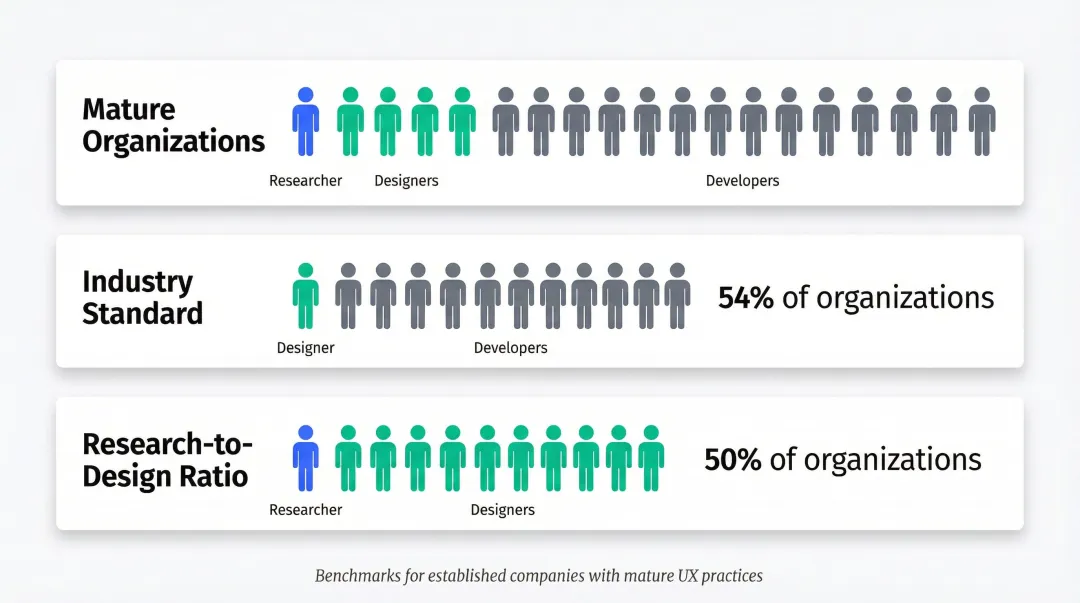

Staffing ratios: Industry benchmarks suggest 1 researcher : 5 designers : 50 developers in mature organizations. Half of organizations maintain at least 1 designer for every 10 developers, while 54% have 1 researcher for every 10 designers.

These ratios are a useful benchmark for mature organizations, but they rarely translate to early-stage teams or startups where building a full internal research function isn't realistic.

For teams in that position, partnering with an external design agency that specializes in climate tech and deep tech can fill the gap. What if Design works with early-stage and growth-stage climate tech companies on UX research projects, from user interviews and usability testing to synthesis and recommendations, at a fraction of the cost of an in-house senior hire. With a domain-familiar partner, no time is lost explaining the technology context, which matters when R&D timelines are tight.

Democratizing research across R&D

Research democratization lets non-researchers conduct basic studies, so organizations can scale insights without growing research teams proportionally.

The goal is to equip product managers, engineers, and designers with enough foundational research skills to run basic studies independently, without waiting for a dedicated researcher.

Creating research toolkits and templates:

- Develop interview guides with pre-written questions for common scenarios

- Create usability test scripts that non-researchers can follow

- Build survey templates for frequent research needs

- Provide synthesis frameworks for organizing findings

Research champions and training programs embed research skills across R&D teams. Identify team members who are already drawn to user insights, provide training on basic methods, and support them as they conduct their first studies. You build research culture while generating findings that feed directly into active decisions.

Building a research repository

Centralizing research insights prevents knowledge loss. Effective repositories require governance to manage taxonomy, metadata, and access across R&D organizations.

Tools and systems for organizing research:

- Research platforms like Dovetail for tagging and searching insights

- Shared drives with clear folder structures and naming conventions

- Wiki systems documenting key findings and recommendations

- Integration with project management tools to link insights to active decisions

Making research actionable: Organize insights by user type, pain point, or product area — not chronologically. Create summary documents that highlight findings relevant to current decisions rather than asking stakeholders to read full research reports.
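
As a rough illustration of organizing by attribute rather than by date, the sketch below tags each insight with a user type, pain point, and product area, then groups on whichever attribute a decision calls for. The `Insight` fields, the `group_insights` helper, and the sample entries are all hypothetical; a dedicated research platform would handle this through its own tagging features.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Insight:
    summary: str
    user_type: str
    pain_point: str
    product_area: str
    study: str  # source study, kept so a finding can be traced back


def group_insights(insights, key):
    """Group insights by one attribute name, e.g. "user_type" or "pain_point"."""
    grouped = defaultdict(list)
    for insight in insights:
        grouped[getattr(insight, key)].append(insight)
    return dict(grouped)


# Hypothetical repository entries.
insights = [
    Insight("Operators misread the capacity chart", "utility operator",
            "confusing data visualization", "dashboard", "usability-round-1"),
    Insight("Buyers cannot restate the ROI figure", "enterprise buyer",
            "unclear ROI metrics", "reporting", "buyer-interviews"),
    Insight("Operators cannot tell which threshold a chart shows", "utility operator",
            "confusing data visualization", "alerts", "usability-round-2"),
]

by_user = group_insights(insights, "user_type")
for user_type, items in by_user.items():
    print(user_type)
    for item in items:
        print(f"  - {item.summary} ({item.study})")
```

Calling `group_insights(insights, "pain_point")` on the same data produces the view a product review would need, without anyone re-reading the original reports.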

Fostering a research culture

Embedding research thinking into R&D processes requires consistent effort and leadership support.

Strategies for building research culture:

- Include user insights in all major decision reviews

- Celebrate examples where research prevented costly mistakes

- Make research findings visible through dashboards and regular presentations

- Require evidence for assumptions in project proposals

Getting leadership buy-in: Demonstrate research value through pilot projects that show clear outcomes. Start with high-visibility initiatives where user insights can prevent obvious problems. Expand as credibility builds across the organization.

Demonstrating value through quick wins: Conduct rapid studies that address immediate questions. Deliver insights within days and track the decisions they inform. Quick wins build momentum and give skeptical stakeholders something concrete to evaluate.

Overcoming common challenges in R&D UX research

Researching emerging technologies users haven't experienced

When users lack reference points for an innovation, analogous research becomes your primary tool — studying how they interact with similar or adjacent technologies gives you the behavioral baseline you need. Pair this with future scenario testing through storyboards or short videos showing the technology in context.

The key is focusing on current pain points and workflows rather than asking users to imagine or predict their behavior with something unfamiliar. Users are poor predictors of their own future behavior; they're much more reliable observers of their current frustrations.

Balancing research rigor with speed demands

Knowing when "directionally sufficient" is enough is a skill in itself. For decisions made during rapid iteration, 5-user tests provide adequate signal to move forward. Reserve comprehensive studies for high-stakes decisions that affect significant investment or lock in architectural choices.

Running multiple small studies rather than one large study maintains learning velocity across the sprint cycle and allows you to validate fixes before committing to the next stage.

Recruiting participants for specialized R&D projects

Creative recruitment strategies for hard-to-reach technical users include:

- Using industry conferences and trade shows as recruitment opportunities

- Partnering with industry associations for access to specialized audiences

- Offering higher incentives for hard-to-reach participants

- Using snowball sampling where participants refer colleagues in their network

- Engaging with online communities and forums in specialized technical domains

Measuring impact: UX research metrics for R&D

For R&D teams, research impact often feels intangible until you translate findings into business metrics. The key is tracking outcomes that matter to stakeholders and the project bottom line.

Key metrics for R&D research:

- Time saved in development: track projects where research prevented rework

- Reduction in late-stage changes: measure design modifications made after development begins

- Innovation success rate: compare outcomes for researched versus non-researched initiatives

Qualitative measures of impact matter equally. Survey stakeholders on decision confidence when research informs choices. Assess cross-functional agreement on user needs and priorities. Track whether research-informed decisions lead to better downstream outcomes. Together, these qualitative indicators complement quantitative data to build a complete picture of research value.

Building a business case for research investment

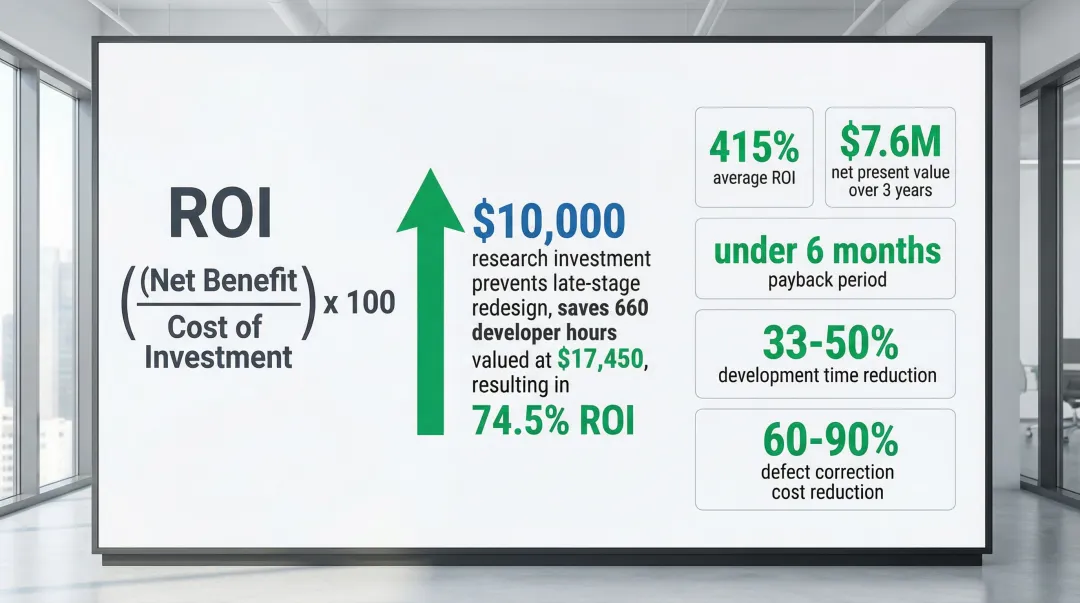

Calculate ROI using this straightforward framework from User Interviews: ROI = (Net Benefit / Cost of Investment) × 100

Example calculation: A research project costing $10,000 prevents a late-stage redesign, saving 660 developer hours valued at $17,450. The net benefit is $17,450 - $10,000 = $7,450, so the ROI is ($7,450 / $10,000) × 100 = 74.5%.
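
The arithmetic in this example can be reproduced in a few lines. The sketch below assumes that net benefit means the value of the hours saved minus the cost of the research; the function name `research_roi` is illustrative and not part of any published framework.

```python
def research_roi(benefit: float, cost: float) -> float:
    """ROI as a percentage: (net benefit / cost of investment) x 100."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    net_benefit = benefit - cost  # assumed definition: value delivered minus spend
    return net_benefit / cost * 100


# Figures from the example: $17,450 in avoided rework, $10,000 research cost.
roi = research_roi(benefit=17_450, cost=10_000)
print(f"ROI: {roi:.1f}%")
```

Keeping the benefit and cost as separate inputs makes it easy to rerun the calculation with a team's own hourly rates and rework estimates.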

Industry benchmarks strengthen the business case further. According to User Interviews' ROI analysis, organizations investing in usability testing realize 415% ROI with net present value of $7.6 million over three years.

Payback periods typically fall under six months. Usability engineering can reduce development time by 33-50% and cut defect correction costs by 60-90%.

R&D teams that build UX research into their process early — not as a checklist item, but as a genuine validation discipline — reach commercial-ready products faster and with fewer expensive course corrections. The methods don't require large budgets or dedicated research teams. They require asking the right questions before committing engineering resources to the wrong answers.

If your team is working on emerging climate or deep tech and UX research isn't part of your current process, it's worth examining where that gap shows up. The most common signals are late-stage design changes and features that work technically but fail to drive adoption.

Frequently asked questions

What is UX research?

UX research systematically studies user behaviors, needs, and motivations through observation, surveys, and testing. It discovers the specific problems worth solving and evaluates whether proposed solutions actually work in practice.

How does UX research differ in R&D versus product development?

R&D research explores and validates new concepts using prototypes and emerging technologies, often before the technology itself is complete. Product development research optimizes existing solutions and refines features users already interact with regularly.

What are the most effective UX research methods for innovation projects?

Use generative methods (ethnography, contextual inquiry) early to discover real opportunities, then evaluative methods (prototype testing, usability studies) to validate specific concepts. The 5-user rule enables rapid iteration with minimal resources, making it practical even for teams without a dedicated researcher.

How can small R&D teams implement UX research with limited resources?

Lean approaches include rapid testing methods, research democratization through toolkits and training, and partnering with external design agencies for specialized needs. External partners with domain familiarity in climate and deep tech provide research capabilities at a fraction of the cost of building an in-house team, with faster ramp-up because the technology context doesn't need to be explained from scratch.

What is the ROI of UX research in R&D?

Organizations investing in usability testing report 415% ROI with net present value of $7.6 million over three years, with payback periods typically under six months. The compounding benefit is that problems caught early are dramatically cheaper to fix — both in engineering time and in the downstream cost of user abandonment.