Why qualitative testing is the foundation of user-centric product design

You've built a product that works. Your pilots are showing early results. Users are completing tasks. But something isn't clicking at scale, and the analytics don't tell you why.

For climate tech and deep tech product teams, this is a familiar tension. Conversion rates, task completion times, and usage dashboards show you what users do. They don't show you whether users abandoned a flow because the interface confused them, because they didn't trust the data, or because the onboarding never matched how they actually work. A 60% task completion rate is a signal, not an answer.

This is where qualitative testing earns its place in the product development cycle. It surfaces the motivations, mental models, and friction points that quantitative data can't capture. For products operating in technically complex, trust-sensitive categories like energy management software or carbon accounting platforms, understanding the "why" behind user behavior isn't optional. It's what separates products that get adopted from products that stall at the pilot stage.

This guide covers the core qualitative testing methods, when to use them, and how to run them effectively across your product lifecycle.

TLDR: Key takeaways

- Qualitative research uncovers user motivations and friction points that usage metrics miss

- Testing with 5 users per round typically uncovers about 85% of usability issues

- Core methods: user interviews, usability testing, contextual inquiry, diary studies

- Delivers the highest ROI during early discovery, before development costs compound

- Pairs with quantitative data to answer both "what" users do and "why" they do it

What is qualitative testing in product design?

Qualitative testing is a research approach that collects non-numerical, subjective data about user experiences, behaviors, and motivations.

Unlike quantitative testing, which measures how many users complete a task or how long it takes, qualitative research explores the why and how behind user actions.

A widely cited example: before launching Lego Friends in 2012, Lego ran extensive qualitative concept testing with young girls. It discovered that they preferred building entire environments over single structures and paid significantly more attention to interior layouts. That insight, which Lego's sales data had never surfaced, shaped the Lego Friends line. According to reporting on the product line's market impact, the value of construction toys for girls grew from approximately $300 million to $900 million between 2011 and 2014. The behavioral nuance behind that outcome was only visible through qualitative research.

Types of data collected include:

- Direct records of user struggles, such as difficulty finding navigation controls or misinterpreting data labels

- Real-time spoken feedback that reveals how users process what they're seeing as they interact with the product

- Attitudinal insights into users' motivations, hesitations, and unmet needs

- Environmental factors affecting use, including workflow interruptions, physical constraints, and existing tool integrations

The goal is to understand how real users think, where they get stuck, and what would need to change for the product to work better for them.

According to the Nielsen Norman Group, qualitative data consists of "observational findings that identify design features easy or hard to use." Through direct observation, researchers can identify the problematic aspects of a design and judge its overall quality.

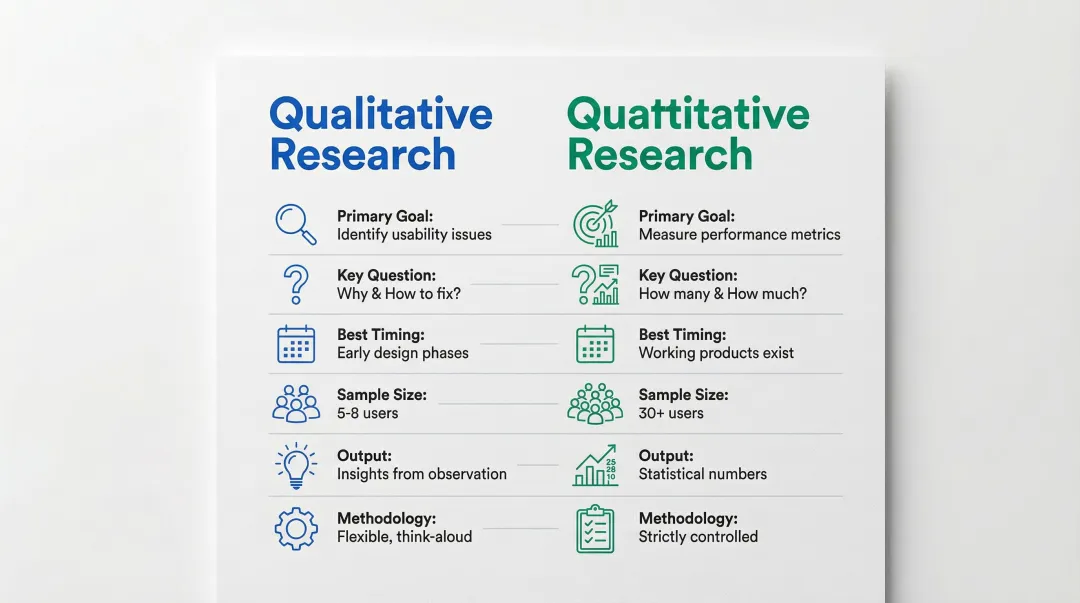

Qualitative vs quantitative testing: understanding the difference

The choice between qualitative and quantitative methods depends on what questions you need answered and when you're asking them.

| Feature | Qualitative research | Quantitative research |
|---|---|---|
| Primary goal | Identify usability issues and solutions | Measure usability metrics and track performance |
| Key question | "Why?" and "How to fix?" | "How many?" and "How much?" |
| Best timing | Early design phases and redesigns | When working products exist |
| Sample size | 5-8 users per iteration | 30+ users for statistical significance |
| Output | Insights based on observation | Statistically meaningful numbers |
| Methodology | Flexible, think-aloud protocol | Strictly controlled, no assistance |

When to use each method

Qualitative testing excels at revealing the root causes of problems. If users abandon a checkout flow or disengage from an onboarding sequence, qualitative research identifies whether they're confused by the interface, distrustful of the system, or missing functionality they expected to find.

Quantitative testing then confirms how widespread that problem is across your full user base.

The most effective approach uses both. UX researchers have warned that relying solely on quantitative data can be "too narrow to be fruitful and oftentimes directly misleading." Quantitative data captures "what" users do but not "why," and that gap can lead teams to optimize the wrong variables entirely. Suppose you're at the pilot stage and optimizing for task completion rate, but the real problem is trust in the underlying data. You'll arrive at your commercial review with metrics that don't address what the buyer is actually evaluating.

The 5-user guideline

Jakob Nielsen's foundational research found that testing with 5 users reveals approximately 85% of usability problems. This is a guideline for iterative design, not a one-time study. The most efficient approach runs three separate rounds with 5 users each: find problems, fix them, then test again to surface what's next. For a resource-constrained team preparing for enterprise evaluation, a single round of five sessions can often fit into a week. That lets you validate your core onboarding flow quickly and walk into a buyer conversation with documented evidence of what you tested and what you fixed.
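The 85% figure comes from a problem-discovery model that Nielsen published with Tom Landauer. Under this model, the share of problems found by n test users is `1 - (1 - L)^n`, where `L` is the probability that a single participant runs into a given problem. Nielsen reported an average `L` of about 31% across the projects he studied. The sketch below uses that average as its default. Treat it as an assumption, because your own product's rate may be higher or lower.

```python
def share_of_problems_found(n_users: int, discovery_rate: float = 0.31) -> float:
    """Expected share of usability problems found by n_users.

    Implements the model 1 - (1 - L)^n, where L (discovery_rate) is the
    probability that one participant hits a given problem. The default
    of 0.31 is Nielsen's reported average and is an assumption here.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= discovery_rate <= 1.0:
        raise ValueError("discovery_rate must be between 0 and 1")
    return 1.0 - (1.0 - discovery_rate) ** n_users


if __name__ == "__main__":
    # Show how quickly the returns diminish as more users are added.
    for n in (1, 3, 5, 10, 15):
        print(f"{n:>2} users: {share_of_problems_found(n):.0%}")
```

With the default rate, five users come out at roughly 84%, in line with the commonly quoted 85%. After that point the curve flattens quickly, which is the arithmetic behind running several small rounds instead of one large study.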

When to use qualitative testing in your product design process

Many climate tech and deep tech founders invest in user research too late. By the time a product has been built, shipped, and instrumented, fixing a fundamental flow issue costs significantly more than it would have during the design phase.

Qualitative testing delivers maximum value during the early discovery phase when design decisions are still flexible and before development costs mount. A 2020 benchmark study found that 63% of UX teams conduct research during discovery to uncover user needs and inform requirements, an approach consistently linked to better product outcomes.

Specific scenarios where qualitative testing is essential:

- Exploring new markets or user segments before building features, particularly in industries like utilities or heavy manufacturing where workflows are deeply embedded

- Validating whether your team's assumptions about users match how those users actually operate

- Diagnosing why users are slow to reach value or why activation rates underperform

- Evaluating prototypes before committing engineering resources to development

- Identifying unmet needs and workflow gaps that usage analytics cannot reveal

During redesign phases

Qualitative testing helps you understand why existing features underperform rather than guessing at solutions. The Nielsen Norman Group's homepage redesign used iterative testing with wireframes and high-fidelity mockups, allowing the team to explore multiple design directions and make live improvements during sessions based on real user feedback.

For climate tech and sustainability products

Qualitative testing is particularly useful for surfacing user motivations around environmental data, reporting workflows, and behavior change. IBM's "Design for Sustainability" initiative is a documented example: qualitative insights revealed that clients were specifically asking about SaaS product emissions and development processes. That feedback led IBM to embed sustainability checklists directly into their design review gates, treating sustainability as a strategic differentiator rather than an afterthought in the product experience.

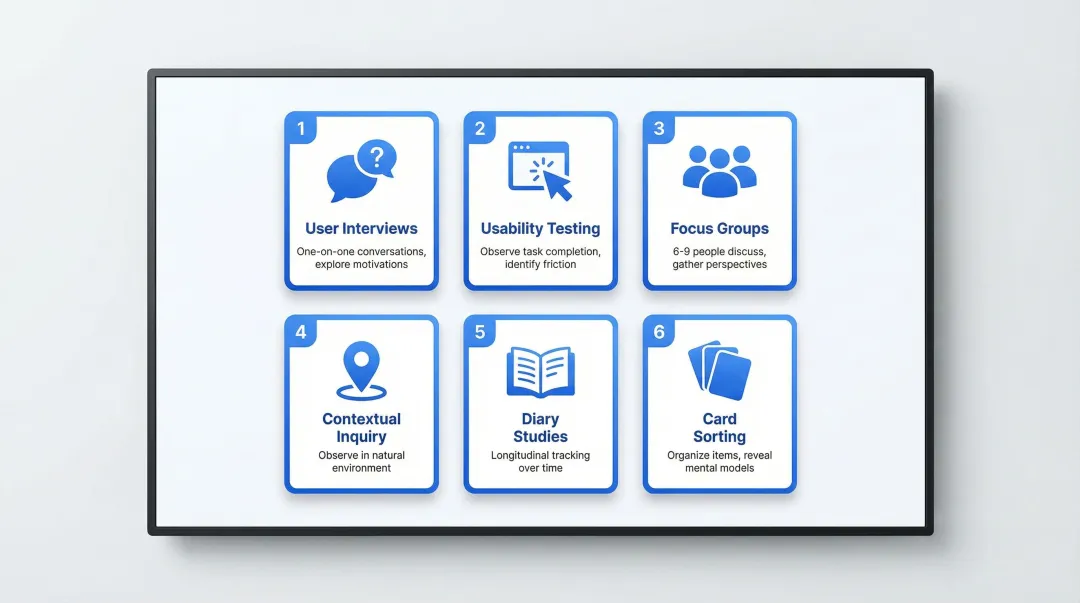

6 essential qualitative testing methods for product designers

User interviews

User interviews involve one-on-one conversations where researchers ask open-ended questions to understand users' experiences, workflows, and challenges. This method generates qualitative data that analytics and surveys are structurally unable to capture.

For climate tech and deep tech products, user interviews are especially valuable when entering markets with specialized workflows, such as grid operators managing asset portfolios or procurement teams evaluating carbon accounting platforms. These users have deeply embedded processes, and researchers need to understand those processes before any design decisions are made. Getting this wrong doesn't just create UX friction. It creates a product experience gap that slows adoption and drives up support overhead. Consider a hypothetical but realistic case: your team assumes grid operators will self-configure alert thresholds, but interviews reveal they expect pre-configured defaults that map to their existing SCADA setup. Interviews let you catch that assumption before the pilot launch, instead of discovering the mismatch live in front of the buyer's team.

When to use: Run interviews during early discovery, when you need to build real knowledge of user needs, mental models, and decision-making processes. Pay particular attention to the organizational constraints, such as approval chains, that shape how buying decisions get made. Interviews are also the right choice for sensitive topics, where users need a private, conversational setting to share honestly.

Best practices: Focus on past behavior — "Tell me about the last time you..." generates more honest responses than hypothetical questions. Use open-ended questions starting with "how," "why," and "what" to avoid leading participants. Prepare a semi-structured guide with core questions and planned follow-ups, but let the conversation breathe.

Usability testing

Once you understand user needs through interviews, usability testing assesses how well your design actually meets those needs. Usability testing involves observing participants perform assigned tasks on a design while researchers identify where the experience breaks down and why.

Climate and energy software often involves complex data visualizations, multi-step configuration flows, or integration-heavy dashboards. Usability testing on these surfaces regularly reveals assumptions the product team carried forward from internal use, and those assumptions rarely map to how external operators or buyers actually work. Time-to-value problems originate in the gap between what a team expects users to do and what users actually do. To make this concrete, imagine the operations lead at a prospective enterprise client attempts your core configuration flow during a product trial and can't reach value in their first session. The deal can stall, not because the technology failed but because the product experience didn't meet expectations. Usability testing on that flow before the trial begins is how you prevent that outcome.

When to use:

- Assessing prototypes at any fidelity, from paper sketches to interactive mockups, before committing to development

- Testing specific flows to identify activation friction and validate design decisions

- Evaluating an existing product to diagnose drop-off or underperformance in key paths

Best practices:

- Use think-aloud protocol where participants verbalize their thoughts in real-time

- Design realistic tasks that reflect actual user activities, not simplified instructions

- Focus on formative insights that inform specific improvements rather than collecting summary metrics

Focus groups

Focus groups bring together 6-9 people for a facilitated discussion about a product, concept, or problem space. They reveal vocabulary, attitudes, and initial reactions, but they are not a substitute for behavioral research and should not be used as a proxy for usability testing.

The key limitation is groupthink: participants influence each other, and socially desirable answers tend to surface over honest ones. Focus groups reveal well how people talk about a problem space, which informs messaging and concept framing. They don't reveal how people actually behave. Commercially, focus groups earn their place when you're entering a new vertical and need to understand the vocabulary procurement teams use to describe the problem you solve. That language shapes your pitch, your responses to evaluation criteria, and your interface copy before you're in the room with a buyer.

When to use: Focus groups work best during early discovery, to gauge initial impressions and capture the vocabulary users naturally use to describe a problem. They're also useful for gathering reactions to concepts and for surfacing where different stakeholder perspectives diverge.

Best practices: Pair focus groups with behavioral methods. The most reliable approach uses focus groups for language and attitudinal data, then validates behavioral assumptions through usability testing or contextual inquiry.

Contextual inquiry / field studies

Contextual inquiry is an ethnographic method where researchers observe and interview users in their actual working environment as they perform real tasks. The researcher operates as an apprentice, learning from the user rather than directing the session.

For hardware-adjacent climate products or software used in industrial settings, field studies surface constraints that never appear in lab testing. These include connectivity issues, the role of non-technical staff in a workflow, physical environment limitations, and the informal workarounds teams have built around gaps in current tools. Such details determine whether a product gets embedded into daily operations or remains an add-on that users route around. In one industrial monitoring context, a field study revealed that operators were keeping parallel paper logs because they didn't trust the software's data freshness display. A lab session would never have surfaced that finding. Resolving the issue before the account renewal conversation kept the contract.

When to use: Contextual inquiry is the right method when you're working with complex operational systems where context is critical to the design problem — particularly when environmental factors like interruptions, tool-switching, or physical constraints are likely to shape how your product gets used. It's also the best method for discovering the unexpected use cases and workarounds that users simply won't surface in an interview setting.

Best practices:

- Follow the master-apprentice model: the user leads, the researcher learns

- Structure sessions in four parts: Primer, Transition, Contextual Interview, and Wrap-up

- Stay anchored to context, partnership, and interpretation rather than defaulting to a scripted interview format

Diary studies

Diary studies are longitudinal methods where participants record their activities, experiences, and observations over days or weeks. They capture behavior patterns and evolving reactions that a single research session cannot replicate.

Some products have longer user journeys, such as monitoring platforms used across billing cycles or reporting tools tied to quarterly workflows. For these, diary studies reveal how usage habits form, where engagement drops, and what triggers users to seek help or abandon a task. This maps directly to onboarding friction and feature adoption gaps that don't show up in session-based research. On a platform tied to quarterly reporting cycles, for example, diary data might reveal that your export flow breaks down when users switch between systems under deadline pressure. Resolving that kind of specific, time-bound friction can improve pilot-to-contract conversion.

When to use: Diary studies work best when you need to understand how user behavior changes over time and across contexts, particularly when mapping customer journeys that span multiple days, systems, or decision points. They're also the most reliable way to capture spontaneous moments of frustration or confusion, which users rarely remember by the time a formal interview takes place.

Best practices:

- Use a mix of event-based (triggered by a specific action), interval-based (at set times), and signal-based (prompted by the researcher) entry types

- Balance participant effort: combine structured questions with open-ended and multimedia prompts

- Always run a pilot before full launch to test instructions, tools, and the overall diary cadence

Card sorting

Card sorting asks users to organize items into groups and label the categories, surfacing how they naturally structure information. This method is most useful for designing navigation and information architecture that matches how users actually think, not how the product team has organized the system internally. When an enterprise buyer evaluates your platform during a trial, navigation that doesn't map to their mental model creates immediate doubt. That doubt is not about the UX but about product fit. Card sorting helps you design navigation that fits the buyer's frame of reference before that evaluation moment arrives.

When to use:

- Designing or restructuring navigation for content-heavy platforms, dashboards, or multi-feature tools

- Validating whether your current information architecture aligns with user mental models

- Understanding how users categorize services, data types, or product offerings

Best practices: Recruit at least 15 participants to see consistent patterns across mental models. Run open sorts first, in which users create their own categories, to discover natural groupings. Follow with closed sorts, in which users sort cards into predefined categories, to validate existing structures.
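A common way to summarize open-sort results across many participants is a co-occurrence (similarity) count. For each pair of cards, it records the share of participants who placed both cards in the same group. The sketch below assumes each participant's sort is recorded as a list of groups, with each group stored as a set of card names. The card labels in the demo are hypothetical.

```python
from collections import Counter
from itertools import combinations


def co_occurrence(sorts: list[list[set[str]]]) -> dict[tuple[str, str], float]:
    """Share of participants who put each pair of cards in the same group.

    `sorts` holds one entry per participant; each entry is that
    participant's list of groups, and each group is a set of card names.
    Pairs that never share a group are omitted (equivalent to 0.0).
    """
    pair_counts = Counter()
    for groups in sorts:
        for group in groups:
            # Sorting names makes ("a", "b") and ("b", "a") the same key.
            for pair in combinations(sorted(group), 2):
                pair_counts[pair] += 1
    participants = len(sorts)
    return {pair: count / participants for pair, count in pair_counts.items()}


if __name__ == "__main__":
    # Hypothetical open-sort results from three participants.
    sorts = [
        [{"Emissions report", "Export CSV"}, {"Alert thresholds", "Site list"}],
        [{"Emissions report", "Export CSV", "Site list"}, {"Alert thresholds"}],
        [{"Emissions report"}, {"Export CSV", "Alert thresholds", "Site list"}],
    ]
    matrix = co_occurrence(sorts)
    for (first, second), share in sorted(matrix.items(), key=lambda item: -item[1]):
        print(f"{first} + {second}: {share:.0%}")
```

Pairs that score high across participants are strong candidates to sit together in your navigation. Pairs with low or missing scores suggest that users see those items as belonging in different places.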

Best practices for conducting effective qualitative testing

Recruiting the right participants

Research quality depends entirely on recruiting participants who represent your actual target users, not approximations of them.

Screen candidates carefully using screener surveys that identify the specific behaviors, roles, and contexts that match your audience.

Key recruitment principles:

- Exclude UX professionals and personal contacts: they provide feedback shaped by design awareness or familiarity with you, neither of which reflects real user behavior

- Build in diversity and inclusion requirements from the start so your findings reflect how the product performs across different users, not just one segment

- Recruit 5-8 users for most qualitative studies, or 3-4 from each distinct user group if your product serves multiple personas
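Screening rules like these are easier to apply consistently once screener responses are stored in a structured form. The sketch below is hypothetical. The `Candidate` fields, the persona labels, and the default cap of four per group are illustrative assumptions based on the principles above, not features of any particular recruiting tool.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    persona: str            # hypothetical labels, e.g. "grid operator"
    works_in_ux: bool       # screener answer: works in UX or design?
    personal_contact: bool  # known to the team outside the study?


def shortlist(candidates: list[Candidate], per_persona: int = 4) -> dict[str, list[Candidate]]:
    """Drop excluded candidates, then keep up to `per_persona` per group."""
    selected: dict[str, list[Candidate]] = defaultdict(list)
    for candidate in candidates:
        # Exclusion rule: design awareness or familiarity skews feedback.
        if candidate.works_in_ux or candidate.personal_contact:
            continue
        # Cap each persona so no single group dominates the findings.
        if len(selected[candidate.persona]) < per_persona:
            selected[candidate.persona].append(candidate)
    return dict(selected)


if __name__ == "__main__":
    pool = [
        Candidate("Ana", "grid operator", False, False),
        Candidate("Ben", "grid operator", True, False),
        Candidate("Caro", "procurement lead", False, False),
    ]
    for persona, people in shortlist(pool).items():
        print(persona, [p.name for p in people])
```

The loop keeps eligible candidates in the order they applied. In practice, you might shuffle or rank candidates before applying the cap.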

The commercial stakes of poor recruitment are direct. If you test with approximations of your actual users, you risk optimizing for an experience that fails its first enterprise evaluation. Getting recruitment right means your findings hold up under buyer scrutiny, not just internal review.

Crafting effective open-ended questions

The quality of qualitative research depends heavily on what you ask and how you ask it. Questions focused on past behavior rather than opinions or future intent generate more reliable data because people describe what they've actually done rather than what they think they would do.

Example questions that work:

- "Walk me through a typical day for you."

- "Tell me about the last time you [performed this task]."

- "How did you feel during this experience?"

- "What challenges did you run into when trying to accomplish this?"

Keep questions neutral and avoid any framing that implies a correct answer. The goal is to hear how users actually think, not to confirm what you already believe. There's also a direct commercial return: the words users choose to describe their problems in discovery interviews are often the words that resonate in sales conversations. The more precisely you understand how users frame the problem, the more precisely you can frame your solution for buyers.

Observation skills: beyond what users say

What users say during a session and what they actually experience often differ, and non-verbal cues are where much of the real signal lives. Moments of hesitation, murmurs of uncertainty, long pauses before clicking, and visible frustration often indicate usability issues even when users explicitly say everything is fine.

What to observe:

- Where users look first on a screen and what they skip over

- How long they pause before taking an action

- Facial expressions or body language indicating confusion or relief

- Workarounds users create to complete tasks the "wrong" way

When you notice hesitation, probe directly: "I noticed you paused there. What were you thinking at that point?" This is often where the most actionable insights surface. Every hesitation you catch and resolve in testing is one fewer friction point during an enterprise evaluation. That evaluation is when buyers assess whether the product meets their requirements and whether they can justify the investment internally.

Analyzing and synthesizing findings

Raw observations become useful only after systematic analysis. The process of moving from notes and recordings to design decisions is where research either creates clarity or collapses into a stack of unread reports.

Transform your findings into actionable recommendations through structured analysis:

- Watch recordings, read transcripts, and compile all session notes before drawing conclusions

- Look for patterns across participants, not just memorable moments from a single session

- Group findings into meaningful categories such as navigation issues, trust concerns, or workflow mismatches

- Rank issues by severity (critical, major, or minor) and by how many participants encountered them

- Translate each observation into a specific design question or recommendation
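The ranking step in the list above can be sketched as a sort on two keys. This sketch assumes each finding has already been tagged with one of the three severity levels and a count of participants who encountered it. The field names are hypothetical, and ordering by severity first is one reasonable choice, not a fixed rule.

```python
from dataclasses import dataclass

# Lower number = more urgent; mirrors the critical/major/minor scale.
SEVERITY_ORDER = {"critical": 0, "major": 1, "minor": 2}


@dataclass
class Finding:
    summary: str
    severity: str          # must be a key of SEVERITY_ORDER
    participants_hit: int  # how many participants ran into it


def prioritize(findings: list[Finding], total_participants: int) -> list[tuple[Finding, float]]:
    """Sort by severity, then by participants affected (most first).

    Returns each finding paired with the share of participants it affected.
    An unknown severity label raises KeyError.
    """
    if total_participants <= 0:
        raise ValueError("total_participants must be positive")
    ranked = sorted(
        findings,
        key=lambda f: (SEVERITY_ORDER[f.severity], -f.participants_hit),
    )
    return [(f, f.participants_hit / total_participants) for f in ranked]


if __name__ == "__main__":
    # Hypothetical findings from a five-person round.
    findings = [
        Finding("Unclear data freshness label", "major", 4),
        Finding("Cannot find export button", "critical", 2),
        Finding("Alert setup ignores SCADA defaults", "critical", 5),
        Finding("Ambiguous chart legend", "minor", 3),
    ]
    for finding, share in prioritize(findings, total_participants=5):
        print(f"[{finding.severity}] {finding.summary}: {share:.0%} of participants")
```

Because the sort orders by severity first, a critical issue seen by two participants still outranks a major one seen by four. If your team weighs frequency more heavily, you can reorder the tuple in the sort key.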

Affinity mapping is one of the most effective collaborative techniques here: grouping similar findings on sticky notes or a digital board lets the whole team see patterns across participants at once. This shared synthesis typically builds alignment on priorities faster than a research readout alone. For a team preparing for enterprise pilots or a funding conversation, a prioritized list of evidence-backed design changes also signals product maturity to buyers and investors. It shows you've tested, learned, and acted on what you found.

The pattern across all six methods is consistent: qualitative research replaces assumptions with observations. For products where onboarding complexity, trust signals, or workflow mismatches are real barriers to adoption, that shift makes a measurable difference in activation rates, time-to-value, and support burden. You don't need to run every method. You need to run the right ones, with the right participants, at the right stage.

If your research is surfacing gaps between how users behave and how your product is designed, that's often a signal that the product experience hasn't scaled with the product itself. What if Design works with climate tech and deep tech product teams to translate research findings into design decisions that reduce friction, improve activation, and support faster time-to-value across complex workflows.

Frequently asked questions

What is qualitative testing?

Qualitative testing is a research method that collects subjective, non-numerical data about user experiences to understand the "why" behind behavior. It focuses on observations, direct quotes, and behavioral patterns rather than aggregate metrics.

What methods are used in product design testing?

Common methods include user interviews, usability testing, focus groups, contextual inquiry, diary studies, and card sorting. Each method surfaces different types of data, so you'll typically combine several to build a complete picture of user needs.

Is product testing qualitative or quantitative?

Effective product testing uses both. Qualitative research reveals why users behave in certain ways and what would need to change to improve the experience. Quantitative research measures how many users are affected and validates whether proposed solutions work at scale.

What are the main types of qualitative research designs?

The primary types are exploratory research (discovering user needs before design decisions are made), evaluative research (assessing whether a design is working), and generative research (developing new concepts based on observed user behavior). Each serves a different stage of the design and product development process.