Why secondary research is your edge in UX design

You're six months into building a product for grid operators, carbon markets, or industrial buyers. Your founding team has real domain expertise. You understand the regulatory environment, the buyer persona, the technical constraints. What you don't have is a clear picture of how similar users actually behave with comparable tools — and your next sprint starts Monday.

This is where most technical teams either skip the research phase entirely or commission studies that take weeks and cost more than the problem warrants. Neither outcome serves you well. Secondary research, the practice of analyzing existing data before generating new data, closes that gap without those tradeoffs. It's not just an efficiency play; it's what separates teams that design with informed assumptions from teams that discover expensive misalignments in usability testing.

This guide covers what secondary research actually involves in a UX context, when it matters most, and how to run it without falling into the common traps that waste the time it's supposed to save.

TLDR:

- Secondary research analyzes existing data rather than collecting new data, which cuts research time significantly

- Run it first to identify gaps, refine questions, and focus your primary research investment

- Combine internal sources (analytics, past studies) with external sources (academic papers, industry reports)

- Always validate secondary findings with targeted primary research for your specific context

- Free resources (NN/g, Google Scholar, ACM Digital Library) provide quality insights

What is secondary UX research?

Secondary research is the practice of gathering and analyzing existing data to inform design decisions. Rather than conducting new studies, you're synthesizing findings that already exist — from industry reports and academic papers to your organization's past research and analytics data. Think of it like reviewing prior technical assessments before specifying a new system: you don't start from zero when there's already relevant data available. According to Nielsen Norman Group, secondary research should be a standard first step in rigorous research practices, helping teams identify what's already known before investing resources in primary data collection.

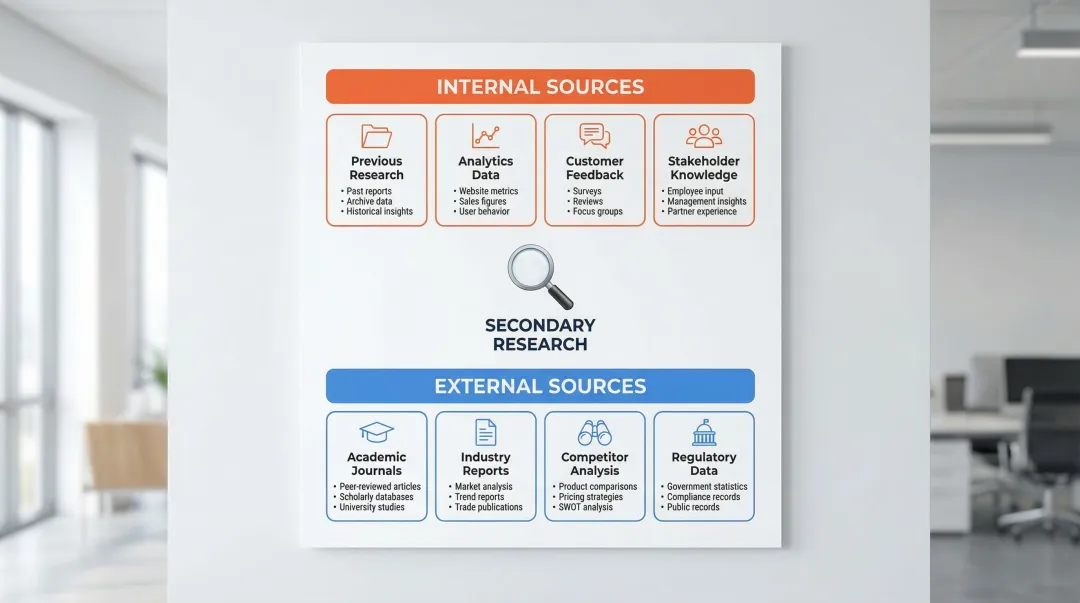

Internal vs external sources

Secondary research draws from two distinct categories:

Internal sources:

- Past user research (interviews, usability tests, surveys)

- Analytics and behavioral data (website metrics, heatmaps, user flows)

- Customer feedback channels (support tickets, reviews, NPS surveys)

- Stakeholder knowledge (product managers, sales teams, customer success)

- Research repositories and documentation

External sources:

- Academic journals (Google Scholar, ResearchGate, ACM Digital Library)

- Industry reports (Forrester, Gartner, Nielsen Norman Group)

- Competitor analysis (websites, app reviews, user flows)

- UX research databases (NN/g articles, Baymard Institute)

- Government and regulatory data (census information, accessibility guidelines)

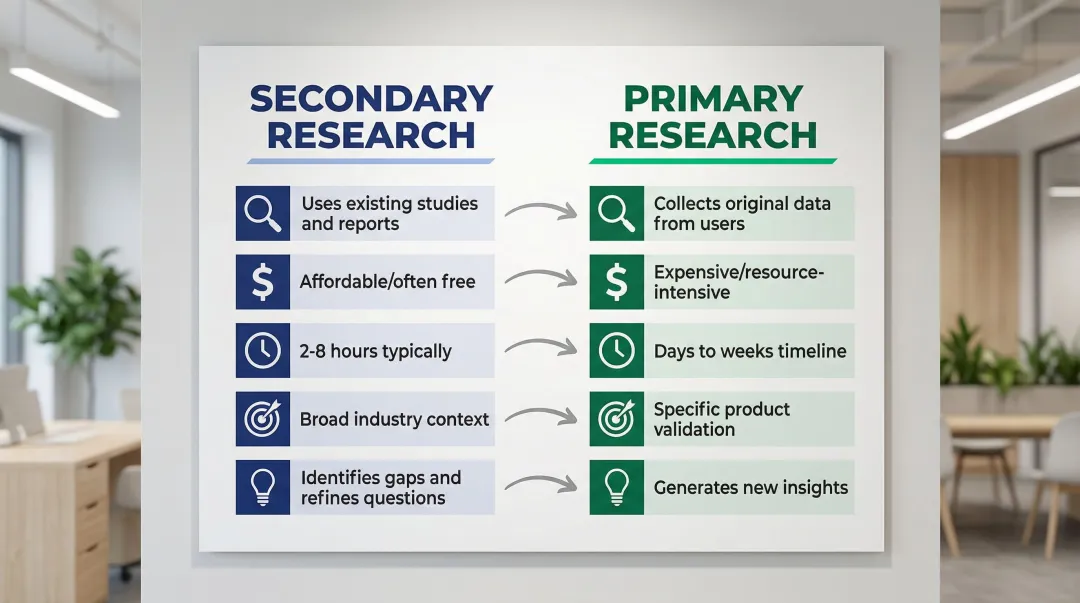

How it differs from primary research

Here's how they compare:

| Aspect | Secondary research | Primary research |
|---|---|---|
| Data source | Existing studies and reports | Original data from users |
| Cost | Affordable, often free | Expensive, resource-intensive |
| Timeline | 2-8 hours typically | Days to weeks |
| Scope | Broad industry context | Specific product validation |
| Purpose | Identify gaps, refine questions | Generate new insights |

Secondary and primary research work together, not as replacements. The difference comes down to data synthesis versus data generation. Secondary research lays the foundation that makes primary research more targeted and efficient.

When and why to conduct secondary research

Strategic timing

Secondary research delivers maximum value during specific project phases:

At project kickoff: Start here before any primary research. It reveals what's already known, preventing redundant studies and focusing your investigation on genuine knowledge gaps.

When exploring new problem spaces: Entering unfamiliar territory? Desk research builds contextual understanding quickly, helping you speak the industry's language without starting from scratch. For teams working across multiple climate tech verticals — carbon capture, grid modernization, clean energy infrastructure — this is particularly relevant because each sub-sector has its own user behaviors, regulatory context, and adoption curve.

Before entering unfamiliar markets: Designing for a sector or region you haven't worked in before requires understanding regulatory environments, procurement processes, and user behaviors specific to that context. Secondary research provides that foundation before you invest in local recruitment or interviews.

When resources are constrained: Limited budget or tight timeline? Desk research is faster and more cost-effective than primary research, and it helps you direct spending toward the primary research questions that actually need original data.

Understanding when to deploy secondary research is only half the equation. The real value comes from knowing what it actually does for your team — and where its limits are.

Why secondary research matters for design teams

Building contextual understanding without losing weeks to onboarding: For product teams building in carbon capture, grid modernization, or EV infrastructure, secondary research matters more than in most software categories. The domain complexity is real, the regulatory context shifts frequently, and the users (often operators, engineers, or procurement leads) have very specific mental models. Secondary research helps you map those models before investing in interviews, so you're asking sharper questions from day one.

Refining research questions and scope: Broad questions like "How do users feel about our product?" become focused, actionable objectives like "What specific barriers prevent operations managers from completing onboarding without IT support?" This narrows your investigation to what truly matters — and this is where it saves the most time.

Identifying knowledge gaps strategically: Discover what's already known versus what needs original investigation. In one documented case, researchers reviewed 2,250 unique user studies across 1,662 manuscripts to understand participant compensation practices. Teams asking the same question today can start from that synthesis instead of generating new primary data.

Validating or challenging assumptions: Test hypotheses against existing data before investing in expensive studies. If your assumption is that enterprise users want more features, industry research might reveal they consistently prioritize reliability and integration simplicity over feature breadth. Finding that through secondary research is far cheaper than finding it through a failed launch.

Finding relevant statistics and benchmarks: Support design decisions with credible data. Baymard Institute's checkout research estimates that the average large e-commerce site can gain a 35.26% increase in conversion rate through better checkout design. That kind of benchmark gives you an evidence base for stakeholder conversations without running a study from scratch.

Types and sources of secondary research

This form of research draws from two major categories: internal sources within your organization and external sources from the broader research community. Knowing what's available in each category helps you build a comprehensive research foundation before conducting primary studies.

Internal research sources

Previous user research: Your organization's past interviews, usability tests, surveys, and diary studies contain valuable insights that often go unmined. Many teams rediscover problems they've already solved simply because previous research wasn't properly documented or shared across the team.

Analytics and behavioral data include website and app analytics (traffic patterns, conversion rates), heatmaps showing where users click and scroll, user flow data revealing navigation patterns, A/B test results from previous experiments, and conversion metrics and drop-off points.

Customer feedback channels include support tickets revealing common pain points, customer service logs documenting recurring issues, product reviews on app stores and review sites, social media comments and mentions, and NPS survey responses with open-ended feedback.

Stakeholder interviews and documentation draw on product managers and sales teams with direct user contact, customer success managers documenting user needs, project briefs and strategy documents, and competitive analyses from marketing or strategy teams.

External research sources

Academic and industry knowledge

Academic and scholarly sources:

- Google Scholar: Broad access to peer-reviewed papers

- ResearchGate: Platform for accessing scientific research

- ACM Digital Library: As of January 1, 2026, the Basic version is freely accessible to the public, opening access to high-quality HCI research

Industry reports and market research:

- Nielsen Norman Group research and guidelines

- Baymard Institute — free plan available with 50 guidelines and articles

- Forrester and Gartner industry reports

- Market research from specialized firms

UX research databases and publications worth bookmarking include NN/g articles and videos, UX Collective on Medium, Boxes and Arrows, and UXmatters.

Competitive and market intelligence

Competitor analysis spans competitor websites and user flows, app store reviews revealing user frustrations, Product Hunt comments and discussions, and feature comparisons and positioning analysis.

Regulatory and public data sources

Government and standards organizations provide census data for demographic insights, WCAG accessibility guidelines, industry-specific regulatory reports, and public health and safety data.

Climate tech and sustainability sources include CleanTechnica and GreenBiz for industry trends, climate startup databases tracking emerging solutions, corporate sustainability reports from established companies, regulatory reports on emissions standards and climate policy, and industry analyses of adoption barriers and market dynamics.

Step-by-step process for conducting secondary research

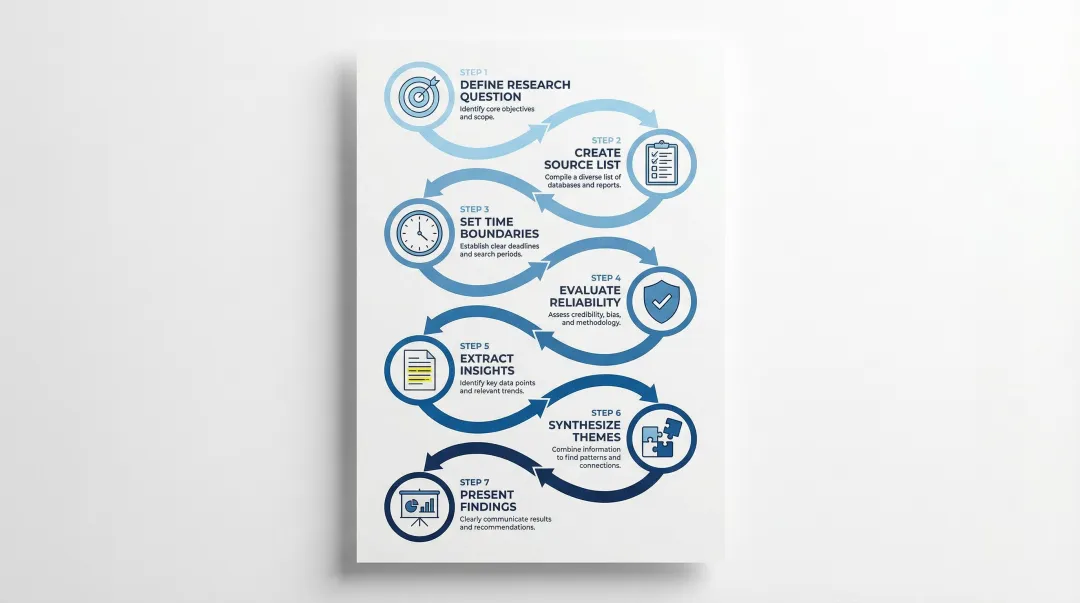

Planning your research

1. Define your research question clearly

Start with a specific problem statement. Instead of "research our users," ask "What barriers prevent enterprise buyers from completing our onboarding process without IT support?" Identify whether you need exploratory research (understanding a new space) or confirmatory research (validating specific hypotheses). The answer shapes which sources you prioritize.

2. Create a prioritized source list

Build your source list strategically, starting with internal sources (faster, more directly relevant to your product) before external ones.

Prioritize based on relevance to your specific question, quality and credibility, and accessibility and review time.
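One way to make that prioritization explicit is to score each candidate source and sort the list. The sketch below is illustrative only: the `Source` fields, the 1-5 scales, and the example entries are assumptions made for this example, not part of any standard method.

```python
from dataclasses import dataclass


@dataclass
class Source:
    name: str
    internal: bool       # True for sources inside your organization
    relevance: int       # 1 (tangential) to 5 (directly answers the question)
    credibility: int     # 1 (opinion piece) to 5 (transparent methodology)
    review_hours: float  # rough estimate of the time needed to review it


def prioritize(sources):
    """Sort internal sources first, then by relevance, credibility, and review time."""
    return sorted(
        sources,
        key=lambda s: (not s.internal, -s.relevance, -s.credibility, s.review_hours),
    )


# Hypothetical candidates for an onboarding research question.
candidates = [
    Source("Vendor whitepaper", False, 4, 2, 0.5),
    Source("Support ticket export", True, 4, 3, 1.0),
    Source("NN/g onboarding articles", False, 4, 5, 1.0),
    Source("Past onboarding usability tests", True, 5, 4, 1.5),
]

ranked = prioritize(candidates)
for position, source in enumerate(ranked, start=1):
    print(position, source.name)
```

Sorting on a tuple keeps the rule readable: internal before external, then the three criteria in the order listed above. Adjust the order of the tuple if your team weighs them differently.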

3. Set time boundaries

Allocate 2-4 hours for initial research sweeps to avoid getting lost in tangents. For deeper investigations, cap yourself at one full day. Systematically work through your source list rather than following every interesting reference you encounter.

Evaluating and extracting data

4. Evaluate source reliability

Once you've gathered sources, assess each one carefully using these criteria:

- Publication date: Prioritize sources within 2-3 years for digital products

- Author credentials: Verify they are recognized experts in the relevant field

- Methodology transparency: Confirm they explain how data was collected

- Potential bias: Check funding sources and commercial interests

- Sample and population: Check that the sample is large enough to support the claims and that the study population is comparable to your users
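The criteria above can be turned into a simple checklist that produces a list of concerns to record next to each source. This is a minimal sketch under stated assumptions: the `SourceCheck` fields, the flag wording, and the example report are hypothetical, and the three-year default reflects the guideline for digital products given above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class SourceCheck:
    title: str
    published: date
    recognized_author: bool
    methodology_explained: bool
    commercial_interest: bool
    comparable_population: bool


def reliability_flags(source, today, max_age_years=3):
    """Return a list of concerns to note for the source; empty means none found."""
    flags = []
    age_years = (today - source.published).days / 365.25
    if age_years > max_age_years:
        flags.append("outdated")
    if not source.recognized_author:
        flags.append("author credentials unverified")
    if not source.methodology_explained:
        flags.append("methodology not explained")
    if source.commercial_interest:
        flags.append("possible commercial bias")
    if not source.comparable_population:
        flags.append("population differs from your users")
    return flags


# Hypothetical vendor report, checked against a fixed review date.
report = SourceCheck(
    title="Example vendor report",
    published=date(2020, 5, 1),
    recognized_author=True,
    methodology_explained=True,
    commercial_interest=True,
    comparable_population=True,
)
flags = reliability_flags(report, today=date(2025, 1, 1))
print(flags)
```

A flagged source is not automatically excluded. The flags are notes to carry into your synthesis so that stakeholders can see why a finding was weighted the way it was.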

5. Extract and document key insights

Select 3-5 sources that directly address your research questions. For each one, note key findings in your own words, record the exact citation and URL, flag limitations or contextual differences, and identify supporting statistics with their sources.
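If your team keeps notes in a shared repository, a small record type can help keep each entry complete. The following is a sketch, not a prescribed format: `InsightNote`, its field names, and the example entry (including the citation) are placeholders invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class InsightNote:
    finding: str   # key finding, in your own words
    citation: str  # exact citation
    url: str
    limitations: list = field(default_factory=list)  # contextual differences
    statistics: list = field(default_factory=list)   # supporting numbers


def missing_fields(note):
    """List the required fields that are still empty."""
    required = {"finding": note.finding, "citation": note.citation, "url": note.url}
    return [name for name, value in required.items() if not value.strip()]


# Hypothetical entry; the citation is a placeholder and the URL is not yet filled in.
note = InsightNote(
    finding="Operations managers stall at the integration step during onboarding.",
    citation="Example Research Group (2024). Enterprise onboarding study.",
    url="",
    limitations=["Sample drawn from retail, not industrial buyers"],
)
gaps = missing_fields(note)
print(gaps)
```

Checking for empty citation and URL fields before synthesis means every finding you later present can be traced back to its source.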

Synthesizing and sharing

6. Synthesize findings into themes

Look for patterns across sources. Group related insights, identify contradictions, and note where multiple independent sources confirm the same finding — that convergence is where you can build confidence.

Create a summary document that captures major themes and patterns, knowledge gaps that remain unresolved, contradictions requiring further investigation, and recommended next steps.
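The grouping step can be sketched in a few lines of code. The example below assumes each extracted insight has been tagged with a theme, its source, and whether it supports the theme; the data, the status labels, and the default threshold of three independent sources are illustrative assumptions.

```python
from collections import defaultdict

# Hypothetical tagged insights collected during extraction.
insights = [
    {"theme": "onboarding needs IT support", "source": "internal usability tests", "supports": True},
    {"theme": "onboarding needs IT support", "source": "support tickets", "supports": True},
    {"theme": "onboarding needs IT support", "source": "industry report", "supports": True},
    {"theme": "users want more features", "source": "sales notes", "supports": True},
    {"theme": "users want more features", "source": "industry report", "supports": False},
    {"theme": "mobile access matters", "source": "analytics", "supports": True},
]


def summarize(items, min_sources=3):
    """Label each theme as convergent, a contradiction, or needing more evidence."""
    by_theme = defaultdict(lambda: {"for": set(), "against": set()})
    for item in items:
        side = "for" if item["supports"] else "against"
        by_theme[item["theme"]][side].add(item["source"])

    summary = {}
    for theme, sides in by_theme.items():
        if sides["for"] and sides["against"]:
            summary[theme] = "contradiction"
        elif len(sides["for"]) >= min_sources:
            summary[theme] = "convergent"
        else:
            summary[theme] = "needs more evidence"
    return summary


summary = summarize(insights)
for theme, status in summary.items():
    print(f"{theme}: {status}")
```

Sources are stored in sets so that several quotes from the same report count once. Themes labeled as contradictions or as needing more evidence are natural candidates for the list of open questions in your summary document.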

7. Present findings to stakeholders

Share your synthesis with clear insights, identified knowledge gaps, and recommended primary research approaches to fill those gaps. Be explicit about which findings come from contexts that differ from yours, and which conclusions need validation for your specific product and user base.

Challenges and best practices for secondary research

Common challenges

| Challenge | Impact on research |
|---|---|
| Outdated information | In fast-moving tech industries, information ages quickly. Research from three years ago about mobile behavior may no longer reflect current patterns, especially in sectors where regulatory changes or market shifts have reshaped how users interact with tools. |
| Data collected for different purposes | A study about e-commerce checkout designed for young adults may not generalize to enterprise software for industrial buyers. Context matters more than the headline finding. |
| Lack of control over quality | You can't verify the methodology of external studies. Some research is actually opinion positioned as data, particularly in blog posts and vendor-produced content. |
| Source bias | Industry reports from vendors often have commercial interests. Research funded by companies may emphasize findings that support their products or market positions. |
| Difficulty determining reliability | With the volume of online content, distinguishing peer-reviewed research from opinion-based articles requires deliberate scrutiny. |
| Information overload | Too many sources lead to situations where you spend more time reading than synthesizing, which defeats the purpose of the exercise. |

Best practices to overcome challenges

Understanding these challenges is useful. Addressing them requires a systematic approach to how you evaluate and use secondary sources.

Prioritize recent sources: Always check publication dates. For digital products, prioritize sources within 2-3 years. Slower-moving industries may allow 3-5 years, but anything older needs strong justification for inclusion.

Cross-reference findings: Never rely on a single source. When multiple independent sources confirm the same finding, your confidence in that finding increases meaningfully. One source is a data point; three is a pattern.

Be transparent about limitations: When presenting findings to stakeholders, clearly state which insights come from contexts that differ from yours and which require validation through primary research. This protects your credibility and sets appropriate expectations.

Document sources and evaluation criteria: Maintain a clear record of which sources you used and why you judged them reliable. This documentation helps future team members build on your work rather than repeating it.

Focus on synthesis, not collection: Your goal is insight, not comprehensiveness. Stop collecting once you can answer your research question and identify the specific gaps that require primary investigation.

Always plan follow-up primary research: Treat secondary findings as informed hypotheses, not confirmed conclusions. Secondary research is not a substitute for primary research — it refines your questions and makes primary research more focused and efficient.

For climate tech and deep tech teams navigating complex user needs, the balance between desk research and primary validation is where limited research budgets have the greatest impact. At What if Design, we build secondary research into the early phases of UX engagements for climate and deep tech clients specifically because domain complexity in these sectors means the gap between assumed user behavior and actual user behavior can be significant — and closing that gap early changes the quality of every design decision that follows.

If your product experience hasn't been revisited since your last sprint cycle, it may be worth assessing where secondary research could sharpen your next round of primary studies before you start recruiting.

Frequently asked questions

What is primary and secondary research in design?

Primary research involves collecting original data through interviews, usability tests, and surveys. Secondary research analyzes existing data from past studies, industry reports, and competitor analysis. Both are necessary — secondary research tells you what's already known, while primary research fills the gaps specific to your product and users.

What is secondary research in UX design?

Secondary research gathers and analyzes existing data from internal or external sources to inform design decisions without conducting new studies. It provides context and refines questions before primary research, helping teams focus their effort on what genuinely needs original investigation.

How long does secondary research typically take?

Focused secondary research usually takes 2-8 hours depending on scope. Initial research sweeps take 2-4 hours to gather key insights and identify major themes. Deeper investigations that involve comprehensive literature reviews can take one to two full days.

Can secondary research replace primary research?

No. Secondary research provides broad context and identifies patterns, but it cannot validate specific designs or uncover behaviors unique to your product and user base. Use it to refine questions and scope, which makes primary research more focused and efficient when you do run it.

What are the best free sources for UX secondary research?

Top free resources include Nielsen Norman Group articles and videos, Google Scholar for academic papers, ACM Digital Library Basic (free as of 2026), Baymard Institute's free plan, competitor analysis through websites and app reviews, your own internal analytics, user reviews, and government databases.

How do I know if secondary research is reliable?

Check publication dates, verify author credentials, and review methodology transparency. Reliable findings are typically confirmed by multiple independent sources. Peer-reviewed studies are more trustworthy than blog posts or vendor-produced reports, though both have their place depending on what you're investigating.